Testing Methodologies: From Requirements to Deployment

Wrap your head around the various testing methodologies, when to apply each one and what each one tests.

Before you can deploy your application, you must prove that it works as intended and is free from defects. That proof is going to require a test plan that integrates several testing methodologies and leverages a variety of tests, each of which proves some aspect of your application is ready for deployment. If you’re looking for testing tools, you’ll want a tool (like Test Studio) that supports as many of these testing methodologies as possible.

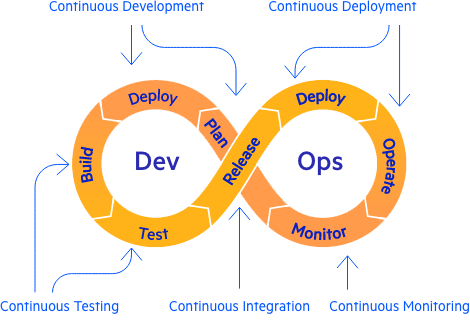

The Testing Life Cycle

You can divide testing into eight stages/testing methodologies:

Defining

Requirements review: Proves your requirements are ready to be used

Usability tests: Prove users can achieve their goals with the application

Building

Unit tests: Prove each component works as intended

Integration tests: Prove a subsystem of tested components works together

End-to-end (E2E) tests (also called system tests): Prove an entire business process that depends on multiple tested subsystems works

Deploying

User acceptance tests (UAT): Prove users can complete business tasks; signal the application is ready to be deployed

Smoke test (also called sanity check): Proves the application starts after deployment

In addition to these test phases with their testing methodologies, you also have an eighth methodology: regression testing, which proves that older tests still succeed after changes have been made. Regression testing is special because it proves not only that your application still works the way you thought it did, but also that your tests do (your tests aren’t raising “false positives” by failing where there isn’t a problem, for example).

Regression testing begins with the requirements phase, continues as long as you’re making changes and involves both developers and QA (but not users). Regression testing is your best protection against software failure but, typically, is only economically possible if your tests are automated. Automation also provides consistency, which is essential because it guarantees you’re running the same test you ran originally.

As you’ll see, this list is customizable. Not all teams, for example, will include UAT for every deployment—some teams will use UAT in earlier stages as part of having users approve further work. Many teams will include smoke tests in the integration, E2E and UAT stages in addition to deployment. It’s important you use the testing methodologies best suited for your application at the appropriate time.

Testing Methodologies for Getting Requirements Right

Requirements define the “right” answer for your tests and, in an Agile environment, contribute to “the definition of done.” Some requirements-gathering processes even include “testability” in the definition of a requirement (user stories, for instance).

Requirements can be tested through a requirements review (also called validating your requirements). The goal is to prove the requirements are consistent, complete and understood by all parties in the same way. It involves at least users and developers, and often surfaces unstated assumptions those two groups have. Including Quality Assurance (QA) at this early stage facilitates testing in later stages. This stage is shorter with Agile teams because, by working together on a product over time, Agile teams effectively end up practicing “continuous validation.”

If the application has a frontend or outputs that users will be interacting with, developers and users can define those interactions through usability tests using mock-ups, paper storyboards or whiteboards. Usability tests also help refine user requirements by expressing requirements in a form that makes sense to users: the application’s UI.

Defining: Functional and Non-Functional Requirements

Not all requirements come from the application’s users. Many requirements—like reliability, response times and accessibility—apply to multiple applications (though these requirements may also be set or modified at the project level).

As the number of requirements increases, it can make sense to divide them into two groups: functional and non-functional requirements. A functional requirement is one that specifies the application’s business functionality (“When an employee ID is entered, the timesheet for that employee will be displayed”). A non-functional requirement specifies how well the application achieves the functional requirements (“Average response time will be under three seconds”).
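To make the distinction concrete, here is a minimal Python sketch that tests the two example requirements quoted above. The `get_timesheet` function, the `TIMESHEETS` data and the employee IDs are all hypothetical stand-ins invented for illustration; a real application would call its own code here.

```python
import statistics
import time

# Hypothetical in-memory data standing in for the real timesheet store.
TIMESHEETS = {"E100": ["Mon 8h", "Tue 7.5h"], "E200": ["Mon 6h"]}


def get_timesheet(employee_id: str) -> list[str]:
    """Return the timesheet entries for an employee ID (hypothetical function)."""
    return TIMESHEETS[employee_id]


def test_functional_timesheet_is_displayed():
    # Functional: entering an employee ID yields that employee's timesheet.
    assert get_timesheet("E100") == ["Mon 8h", "Tue 7.5h"]


def average_response_seconds(call, runs: int = 20) -> float:
    """Call `call` several times and return the mean duration in seconds."""
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        durations.append(time.perf_counter() - start)
    return statistics.mean(durations)


def test_non_functional_average_response_time():
    # Non-functional: average response time stays under three seconds.
    assert average_response_seconds(lambda: get_timesheet("E100")) < 3.0
```

Both tests exercise the same function. The first checks what it does, the second checks how well it does it.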

The border between the two categories has moved over time. These days, for example, security and privacy requirements are more likely to be classified as functional rather than non-functional requirements. As a result, some teams will classify a requirement like “An employee will not be able to view another employee’s timesheet” as a non-functional requirement while other teams will list it as a functional requirement. But, regardless of how a requirement is classified, it needs to be addressed during testing.

Most non-functional requirements are tested in the integration, E2E and UAT stages. However, moving any test to earlier in the process—shift-left design thinking—will uncover problems when they’re cheaper to fix. For instance, rather than waiting until integration testing, you can have developers prove in the unit test phase that suspicious inputs will be rejected or that the user will only be permitted to perform authorized activities.
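As a rough illustration of shifting those security checks left, the following Python sketch unit tests input rejection and authorization. The ID format (`E` followed by three digits) and the functions `is_valid_employee_id` and `can_view_timesheet` are assumptions made up for this example, not part of any real application.

```python
import re

# Assumed ID format for illustration: "E" followed by exactly three digits.
EMPLOYEE_ID_PATTERN = re.compile(r"E\d{3}")


def is_valid_employee_id(raw: str) -> bool:
    """Accept only input that matches the assumed ID format in full."""
    return EMPLOYEE_ID_PATTERN.fullmatch(raw) is not None


def can_view_timesheet(requesting_id: str, owner_id: str) -> bool:
    """An employee may view only their own timesheet (hypothetical rule)."""
    return is_valid_employee_id(requesting_id) and requesting_id == owner_id


def test_suspicious_inputs_are_rejected():
    for raw in ["", "E100' OR '1'='1", "../etc/passwd", "E100\n", "E1000"]:
        assert not is_valid_employee_id(raw)


def test_only_authorized_activity_is_permitted():
    assert can_view_timesheet("E100", "E100")
    assert not can_view_timesheet("E100", "E200")
```

Tests like these run in milliseconds on a developer's machine, which is what makes catching the problem at this stage cheaper than waiting for integration testing.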

Non-functional testing can also be included after UAT in the smoke test. Smoke tests usually prove that at least one user can log on (which proves that authentication works), for example, and often check for excessive response times.

Testing Methodologies for Building: Kinds of Tests

The unit test stage focuses on the smallest testable components, working in isolation (tools like JustMock allow you to isolate individual components of your application for testing). Later test stages (integration, E2E and UAT) involve more components working together and, as the number of components increases, so does the variety of tests that can be included:
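Isolation in a unit test can be sketched as follows. This example uses Python's standard `unittest.mock` rather than JustMock, and the `TimesheetService` class and its `load_entries` dependency are hypothetical names chosen for illustration.

```python
from unittest.mock import Mock


class TimesheetService:
    """Hypothetical component under test; depends on a repository."""

    def __init__(self, repository):
        self._repository = repository

    def total_hours(self, employee_id: str) -> float:
        entries = self._repository.load_entries(employee_id)
        return sum(entry["hours"] for entry in entries)


def test_total_hours_in_isolation():
    # Replace the real repository (database, web service) with a mock
    # so that only TimesheetService's own logic is being tested.
    repository = Mock()
    repository.load_entries.return_value = [{"hours": 8.0}, {"hours": 7.5}]

    service = TimesheetService(repository)

    assert service.total_hours("E100") == 15.5
    repository.load_entries.assert_called_once_with("E100")
```

If this test fails, the fault is in `TimesheetService` itself, because nothing else is running.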

Stress tests: Prove that an application will perform under expected loads

API testing: Proves that an application can interact with other applications

Compatibility testing: Proves an application works as specified on a list of targeted platforms

Security tests: Prove that only authenticated and authorized users can access system functionality (and only if their actions don’t look suspicious)

Each kind of test proves something different about your application and each has its own criteria for success. Compatibility testing (including cross browser testing) can even have different criteria for different test runs. What counts as “success” when your application runs on a device with a 27” screen may be different than when your application runs on a device with a 6.5” screen, precisely because you expect your responsive application to adjust its UI by limiting functionality on the smaller screen.
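One way to express per-platform success criteria is a table of expected results keyed by target device, as in this Python sketch. Everything here is assumed for illustration: the feature names, the 10-inch breakpoint and the `visible_features` function, which stands in for asking the running UI what it shows.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Viewport:
    name: str
    diagonal_inches: float


def visible_features(viewport: Viewport) -> set[str]:
    """Hypothetical stand-in for querying the UI on a given device."""
    features = {"view_timesheet", "edit_timesheet"}
    if viewport.diagonal_inches >= 10:  # assumed breakpoint, illustration only
        features |= {"bulk_export", "side_by_side_compare"}
    return features


# "Success" is defined separately for each targeted platform.
EXPECTED_FEATURES = {
    Viewport("desktop monitor", 27.0): {
        "view_timesheet", "edit_timesheet", "bulk_export", "side_by_side_compare",
    },
    Viewport("phone", 6.5): {"view_timesheet", "edit_timesheet"},
}


def compatibility_failures() -> list[str]:
    """Return the names of platforms whose UI differs from its own criteria."""
    return [
        viewport.name
        for viewport, expected in EXPECTED_FEATURES.items()
        if visible_features(viewport) != expected
    ]
```

An empty list from `compatibility_failures()` means every platform met its own definition of success, even though those definitions differ.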

Exploratory testing will also be performed during the integration, E2E and UAT stages. Exploratory testing encourages individual testers (who may be developers, users or QA) to investigate an application to discover defects not targeted in the test plan (at least, not yet). Exploratory testing is a process of simultaneously designing and executing tests to potentially discover defects.

Based on what you want to prove about your application, you’ll determine which of these kinds of tests you need, what counts as “success” for each kind of test, and how early you’ll use each kind of test. API testing, for instance, is often done at the integration stage to prove that two services can communicate with each other through web calls, queues or events. However, API testing can also be done as early as the unit testing stage to let developers build out an application with the confidence that interfaces with other services have been proved to work correctly.
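As one possible shape for API testing at the unit testing stage, the sketch below checks a recorded sample response against the fields and types a consumer expects. The contract, the field names and the sample payload are all hypothetical; in practice they would come from the service's own documentation.

```python
import json

# Assumed contract for illustration: field name -> required Python type.
TIMESHEET_CONTRACT = {"employee_id": str, "hours": float, "approved": bool}

# A recorded sample response, standing in for a live call to the other service.
RECORDED_RESPONSE = '{"employee_id": "E100", "hours": 7.5, "approved": true}'


def contract_violations(payload: dict, contract: dict) -> list[str]:
    """List every way the payload departs from the contract."""
    problems = []
    for field, expected_type in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected_type):
            problems.append(
                f"wrong type for {field}: expected {expected_type.__name__}"
            )
    return problems


def test_recorded_response_meets_contract():
    payload = json.loads(RECORDED_RESPONSE)
    assert contract_violations(payload, TIMESHEET_CONTRACT) == []
```

Because the test runs against a recorded payload, it needs no network access, which is what lets it move from the integration stage into the unit testing stage.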

Testing Methodologies for Building: Shifting Audiences and Perspectives

At the unit testing stage, only the developer is typically involved. As testing proceeds to later stages, end users become more involved in validating tests (taking over completely at UAT). Using tools like test recorders and keyword testing lets users become even more involved in the integration, E2E and UAT stages. Tests also typically become more complex during these stages, so the role of QA—as dedicated managers of the testing process—increases.

Unit tests, because they’re typically built by the developer for their own code, usually take a white-box testing perspective, which leverages the developer’s knowledge of the internal workings of components to drive test design. The goal in white-box testing at the unit testing stage is to prove that the component does the right thing in the way it was intended to work.

As you might expect, white-box testing is contrasted with black-box testing, which ignores how the code does its job to focus on whether the component meets requirements. You need both types of testing. Relying only on black-box testing, for example, can leave you with code that executes in a way you don’t understand. That code will inevitably blow up (and in a surprising way) when conditions change.
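The difference between the two perspectives can be shown with a pair of tests against the same component. In this Python sketch, `RateCalculator`, its cache and the 1.5 multiplier are hypothetical details invented for the example.

```python
class RateCalculator:
    """Hypothetical component that caches an expensive rate lookup."""

    def __init__(self, lookup):
        self._lookup = lookup
        self._cache = {}
        self.lookup_calls = 0

    def overtime_rate(self, grade: str) -> float:
        if grade not in self._cache:
            self.lookup_calls += 1
            self._cache[grade] = self._lookup(grade) * 1.5
        return self._cache[grade]


def test_black_box_returns_the_right_rate():
    # Black box: only the output matters, not how it was produced.
    calculator = RateCalculator(lambda grade: 20.0)
    assert calculator.overtime_rate("A") == 30.0


def test_white_box_uses_the_cache():
    # White box: relies on knowing the component is meant to cache lookups.
    calculator = RateCalculator(lambda grade: 20.0)
    calculator.overtime_rate("A")
    calculator.overtime_rate("A")
    assert calculator.lookup_calls == 1
```

If the cache were removed, the black-box test would still pass, and only the white-box test would reveal that the component no longer works the way it was intended to.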

As testing proceeds from unit testing to integration testing and the number of people involved increases, there is a progression from white-box to black-box testing. The components chosen for an integration test are picked because they’re known to work together in a specific way, for instance, which is a white-box perspective. However, in integration testing, validation tends to focus on “Did we get the right output?” (the assumption being that it was only possible to get the right answer if the components were working together “as intended”)—a black-box approach.

E2E testing, UAT and smoke tests tend to have an almost entirely black-box approach, so the goal of those stages is proving the application meets the requirements (both functional and non-functional). Having QA involved in requirement reviews pays off here because it gives QA a better understanding of what counts as a successful test at these stages.

Testing Methodologies for Deploying

UAT involves end users who have the authority to sign off on deploying the application. The goal here is to prove that the ultimate stakeholder is comfortable with the changes about to be deployed. QA and developers are involved, but primarily in setting up the test environment and recording any problems that are discovered.

Smoke testing can be entirely automated and may not involve developers, end users or QA—if a problem starting the application is detected, the deployment can be automatically rolled back. As part of a smoke test, though, some teams have a user log in to the application, with a QA person or developer within reach to report any problems to. Other teams use exploratory testing during the smoke test to check for post-deployment defects.
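A fully automated smoke test with rollback might look something like this Python sketch. The check names, the three-second limit and the `rollback` callable are assumptions for illustration; real checks would call the deployed application, and a real rollback would invoke your deployment tooling.

```python
import time
from typing import Callable


def smoke_test(
    checks: dict[str, Callable[[], bool]],
    rollback: Callable[[], None],
    max_seconds: float = 3.0,  # assumed response-time limit
) -> list[str]:
    """Run each check; roll back the deployment if any fails or is too slow."""
    failures = []
    for name, check in checks.items():
        start = time.perf_counter()
        try:
            passed = check()
        except Exception:
            passed = False  # a crashing check counts as a failed check
        elapsed = time.perf_counter() - start
        if not passed:
            failures.append(f"{name}: failed")
        elif elapsed > max_seconds:
            failures.append(f"{name}: too slow")
    if failures:
        rollback()
    return failures
```

A run could be wired up like this, with stand-in checks:

```python
rolled_back = []
result = smoke_test(
    {"application starts": lambda: True, "user can log in": lambda: False},
    rollback=lambda: rolled_back.append(True),
)
# result lists the failed login check, and rollback has been called once
```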

Managing Testing

If you’re thinking that’s a lot of testing methodologies, and more than you have time or budget for … well, you’re probably right. You’ll need to customize this process for your application. You’ll only want to apply tests where the risk warrants testing, and you’ll omit tests where the risk of failure is low (or where possible failures can be easily mitigated). You may know enough about the demand on your application and your IT infrastructure that stress testing doesn’t make sense, for instance.

If, however, you need to do more testing than your resources allow (or just want to free up testing resources to work on adding functionality) then your first step is to look for redundancy among your tests (making sure you don’t have two tests that prove the same thing). Resequencing tests can sometimes reduce the time a test takes by running it against a smaller surface area. For example, can a test that involves only a single API be moved out of integration testing and into unit testing? Working in sprints also helps by reducing the surface area that requires testing.

You should also be looking for tools and techniques that will reduce your testing effort (without reducing its effectiveness) or improve test effectiveness (without increasing the testing effort). Automating frequent and repetitive tests (using tools like Test Studio) not only enables regression testing, it also enables you to test in more environments, making your testing more effective.

Techniques that spread the workload around are also worth looking at. For example, incorporating tools that let users write E2E tests not only automates more tests and distributes the workload but also improves the effectiveness of the tests.

And here’s one final tip (and a last piece of terminology): Unless you’re trying to reduce your code base by removing irrelevant code, don’t worry about the number of lines of code executed during testing (coverage). If your code has passed all your tests, then your code has passed all your tests—you have proven everything that you wanted to prove about your application. It’s ready to be deployed.

| Testing Methodology/Stage | Goal | Black Box/White Box | Audience |
|---|---|---|---|
| Defining | | | |
| Requirements review | Your requirements are correct, complete and understood in the same way by everyone | N/A | Users, Developers, QA |
| Usability tests | Users can achieve their goals with the application | Black box | Users, Developers |
| Building | | | |
| Unit tests | Each component works in the way it was intended to | White box | Developers |
| Integration tests | A subsystem of tested components works together | Mixed | Developers, Users, QA |
| End-to-end (E2E) tests | An entire business process that depends on multiple subsystems completes | Black box | Developers, Users, QA |
| Deploying | | | |
| User acceptance tests (UAT) | Users can complete business tasks; signals the application is ready to be deployed | Black box | Users (QA, Developers in support roles) |
| Smoke test | The application starts after deployment | Black box | Potentially fully automated |
| Requirements to E2E | | | |
| Regression testing | All tests still succeed | Black box | Developers, QA |

Peter Vogel

Peter Vogel is both the author of the Coding Azure series and the instructor for Coding Azure in the Classroom. Peter’s company provides full-stack development from UX design through object modeling to database design. Peter holds multiple certifications in Azure administration, architecture, development and security and is a Microsoft Certified Trainer.