Test Flakiness Demystified



Test automation is a key productivity driver, yet implementing it isn’t easy. Flaky tests and inefficient troubleshooting not only add technical debt to mission-critical deployment workflows but also undermine the success of your automation efforts. Test Studio introduces simplified test debugging with new intelligent suggestions to help deal with test flakiness.

As software products grow, the need for automated testing increases because it saves time and money. However, test automation is a continuous effort, and creating automated tests is only one part of the job: even carefully crafted test cases can fail.

Why Automated Tests Fail

There are several reasons why a test case may fail, so let’s look at them more closely and tell them apart:

- Negative test case – This is a test case in which the action under test is expected to fail. Negative testing ensures your application handles invalid input correctly, for example, when the user types a letter in a numeric field.

- Test case that reveals a bug – This is a successful test case, because it serves one of the main goals of software testing: identifying defects.

- Test flakiness – A flaky test case is one that fails intermittently, or even consistently, for a reason unrelated to the functionality of the application under test.
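The negative test case described in the list above can be sketched with Python’s built-in `unittest` module. `parse_quantity` is a hypothetical validator standing in for the numeric field; it is not part of Test Studio or of any application mentioned here. Note that the negative test passes precisely when the invalid input is rejected.

```python
import unittest


def parse_quantity(text: str) -> int:
    """Hypothetical validator behind a numeric input field."""
    if not text.strip().isdigit():
        # Reject anything that is not a whole number, e.g. a typed letter.
        raise ValueError(f"quantity must be a whole number, got {text!r}")
    return int(text)


class QuantityFieldTests(unittest.TestCase):
    def test_accepts_digits(self) -> None:
        # Positive case: valid input is parsed.
        self.assertEqual(parse_quantity("3"), 3)

    def test_rejects_letter(self) -> None:
        # Negative case: the test passes only if the invalid input is rejected.
        with self.assertRaises(ValueError):
            parse_quantity("3a")
```

Running it with `python -m unittest` reports both tests as passing; if `parse_quantity` ever started accepting letters, the negative test would be the one to fail.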

Why You Need To Deal With Flaky Tests

Test flakiness is such a common problem that it impacts all teams, big or small.

A flaky test behaves differently between runs even when neither the code nor the software has changed. Flaky tests can be costly because they often require a lot of maintenance, but their real cost is the loss of confidence in your automated test suite.

When a test case fails, our first job is to determine whether that happened because of a defect or because of an issue within the test case.
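One common, tool-agnostic way to start making that call is to rerun the failing test several times without changing any code: a test that sometimes passes and sometimes fails is flaky, while one that fails every time more likely points to a real defect or a broken test. The Python sketch below illustrates this idea under simple assumptions; `classify`, the sample checks, and the default of 20 runs are hypothetical and are not how Test Studio itself works.

```python
from collections import Counter
from typing import Callable


def classify(check: Callable[[], None], runs: int = 20) -> str:
    """Rerun a check with no code changes and classify its outcome history."""
    outcomes: Counter = Counter()
    for _ in range(runs):
        try:
            check()
            outcomes["pass"] += 1
        except Exception:
            # Any exception (failed assertion, timeout, missing element) counts as a failure.
            outcomes["fail"] += 1
    if outcomes["fail"] == 0:
        return "stable pass"
    if outcomes["pass"] == 0:
        return "consistent failure"  # investigate as a possible defect or a broken test
    return "flaky"  # identical code, mixed results


def check_always_passes() -> None:
    assert 2 + 2 == 4


def check_always_fails() -> None:
    # Stands in for a test that exposes a real bug.
    assert 2 + 2 == 5


class IntermittentCheck:
    """Stand-in for a check that loses a race on every third run."""

    def __init__(self) -> None:
        self.calls = 0

    def __call__(self) -> None:
        self.calls += 1
        assert self.calls % 3 != 0


if __name__ == "__main__":
    for name, check in [("always passes", check_always_passes),
                        ("always fails", check_always_fails),
                        ("intermittent", IntermittentCheck())]:
        print(name, "->", classify(check))
```

Reruns only provide evidence, not proof: with too few runs, an intermittent check can look stable (calling `classify(IntermittentCheck(), runs=2)` returns `"stable pass"`), so the run count should reflect how rarely the suspected flakiness shows up.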

What’s New in Test Studio R2 2021?

Luckily, the latest Test Studio R2 2021 release ships with new simplified test debugging features that can significantly reduce the overall time spent on test maintenance and help users effortlessly identify the reasons behind test flakiness.

Step Failure Details

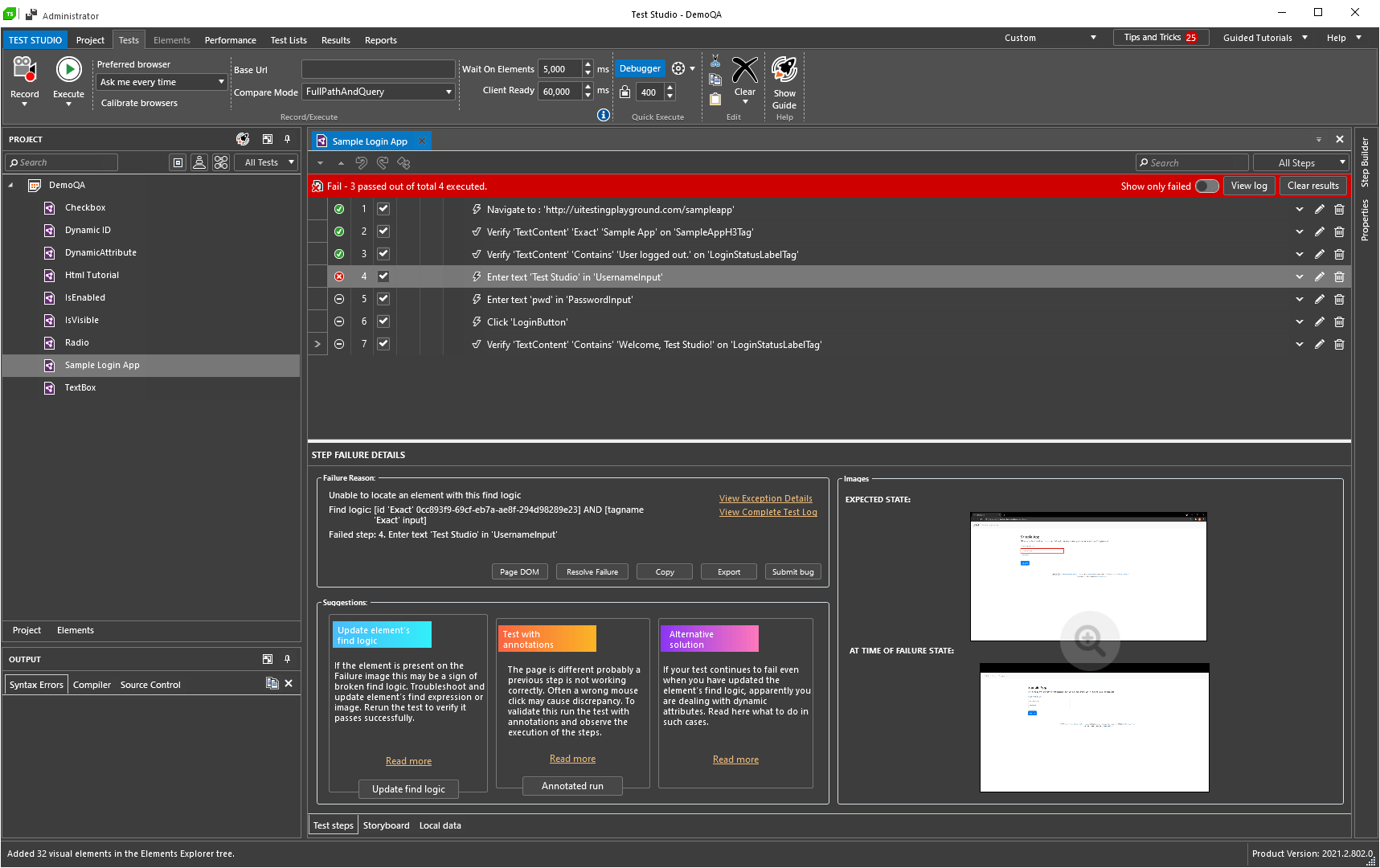

When a test fails, the new, fully functional Step Failure Details window in Test Studio provides comprehensive information about why and how the test failed, as well as screenshots of the expected and actual application state.

The most significant improvement is the new intelligent suggestions, which guide the user in fixing the issue. Each suggestion is tailored to the specific exception behind the test failure, so that Test Studio suggests the most relevant course of action.

Causes of Test Flakiness

There can be numerous reasons for test flakiness. Below, I outline a few of the most common ones automation engineers face:

Timing Issues

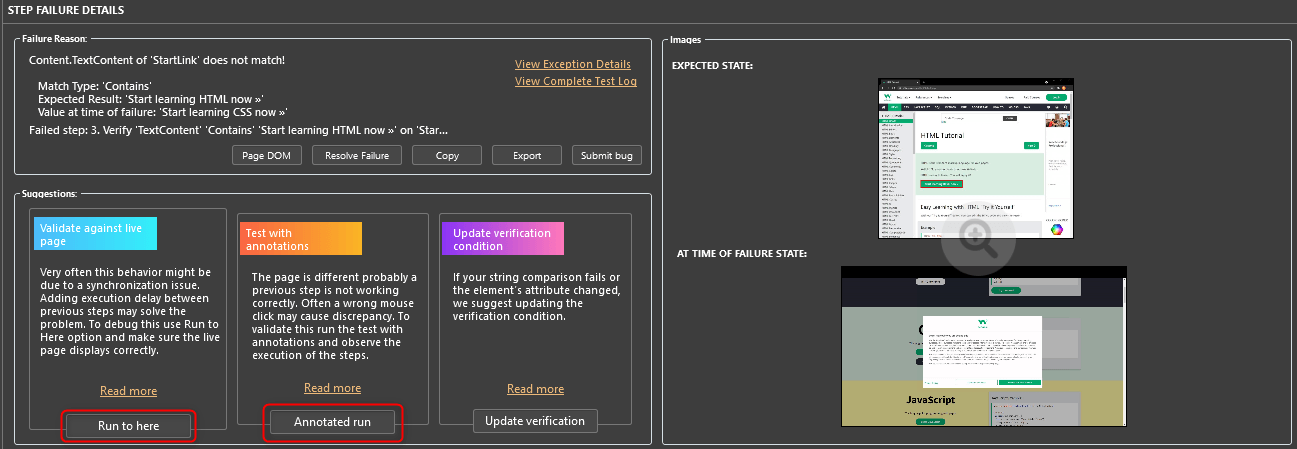

When the application state is not consistent between test runs, or the expected result is not returned fast enough, a test failure may occur. Such a scenario may require adding a wait step so that the test doesn’t fail unexpectedly.

In this case, Test Studio suggests the appropriate actions to fix the test execution. Each suggestion has actionable buttons that guide the user through the entire process.

More details on troubleshooting failing tests caused by timing issues can be found in the following guides: Run to here and Annotated run.
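Conceptually, a wait step can be thought of as polling for a condition until a timeout expires, rather than sleeping for a fixed amount of time. The sketch below shows that general pattern in plain Python; `wait_until`, `SlowPage`, and the default timeout and polling interval are illustrative assumptions, not Test Studio’s implementation or API.

```python
import time
from typing import Callable


def wait_until(condition: Callable[[], bool], timeout: float = 5.0, interval: float = 0.1) -> bool:
    """Poll `condition` until it returns True or `timeout` seconds elapse.

    Returns True as soon as the condition holds and False on timeout, so the
    test waits only as long as the application actually needs.
    """
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


class SlowPage:
    """Hypothetical page whose result only becomes visible after `delay` seconds."""

    def __init__(self, delay: float) -> None:
        self._ready_at = time.monotonic() + delay

    def result_visible(self) -> bool:
        return time.monotonic() >= self._ready_at


if __name__ == "__main__":
    page = SlowPage(delay=0.3)
    # A check made immediately would fail; a bounded poll tolerates the delay.
    print("immediate check:", page.result_visible())
    print("after waiting:", wait_until(page.result_visible, timeout=2.0))
```

A fixed sleep either wastes time when the result arrives early or still fails when it arrives late; a bounded poll avoids both, and returning `False` on timeout lets the test report a clear failure instead of hanging.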

Fragile Find Expressions for Locating Elements

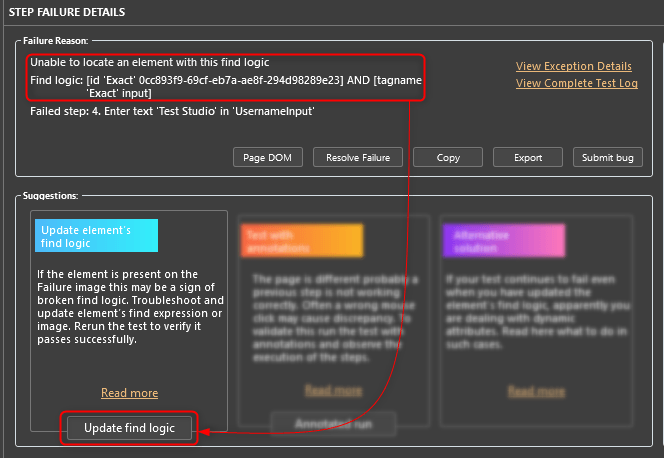

Modern applications often generate dynamic values for element attributes such as IDs. In such cases, the ID is not a reliable attribute to use in a find expression. By default, many UI automation tools record IDs in the element’s primary find logic, but Test Studio can be instructed to skip dynamic IDs.
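To make the idea of skipping dynamic IDs more concrete, the sketch below shows one possible heuristic: treat an ID as generated if it contains a long run of digits or a GUID, and rely instead on attributes that are less likely to change between builds. The regular expressions, the attribute names (`data-testid`, `name`, `aria-label`), and the `build_find_expression` helper are assumptions for illustration only; this is not how Test Studio implements its find logic.

```python
import re
from typing import Dict

# Assumed patterns for auto-generated IDs; tune these for the application under test.
DYNAMIC_ID_PATTERNS = [
    re.compile(r"\d{4,}"),  # long numeric runs, e.g. "ctl00_content_12345"
    re.compile(r"[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}", re.IGNORECASE),  # GUIDs
]

# Attributes assumed to stay stable across builds, in order of preference.
STABLE_ATTRIBUTES = ("tag", "data-testid", "name", "aria-label")


def looks_dynamic(value: str) -> bool:
    """Return True if an attribute value matches any auto-generated pattern."""
    return any(pattern.search(value) for pattern in DYNAMIC_ID_PATTERNS)


def build_find_expression(attributes: Dict[str, str]) -> Dict[str, str]:
    """Select the attributes to match on, skipping an ID that looks generated."""
    expression: Dict[str, str] = {}
    element_id = attributes.get("id")
    if element_id and not looks_dynamic(element_id):
        expression["id"] = element_id
    for name in STABLE_ATTRIBUTES:
        if name in attributes:
            expression[name] = attributes[name]
    return expression


if __name__ == "__main__":
    recorded = {"id": "ctl00_content_12345", "tag": "button", "name": "submit"}
    print(build_find_expression(recorded))  # {'tag': 'button', 'name': 'submit'}
```

The heuristic only guesses which IDs are generated, so any such rules still need to be checked against the real application before they are trusted.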

When the test failure is related to an “Element Not Found” exception, Test Studio’s debugging feature offers to update the element’s find logic:

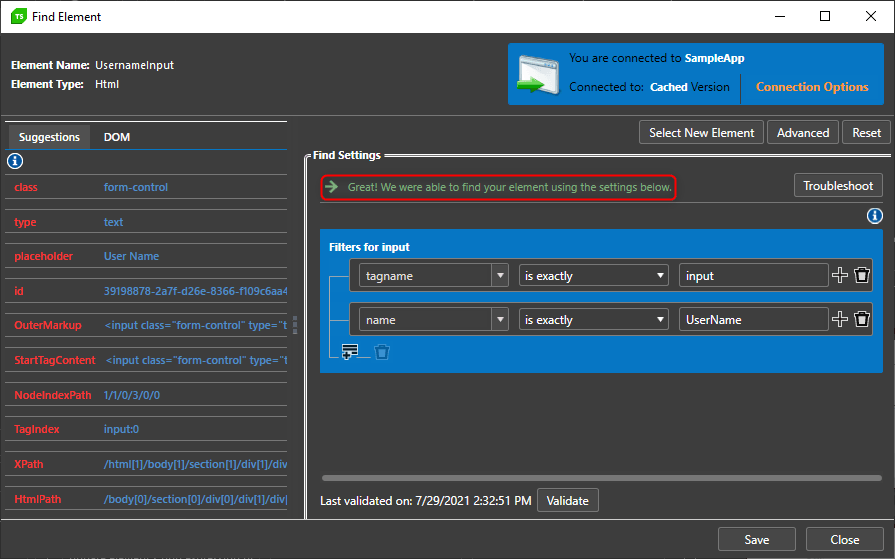

The actionable “Update find logic” button opens the Find Element menu, where the user can tweak the problematic find expression, validate the new find expression, and save the changes.

Check out the detailed documentation on how to handle elements with dynamic attributes.

Other reasons for flaky automated tests include, but are not limited to:

- Concurrency – There may be data races, deadlocks or other synchronization issues in the application code or in the tests themselves.

- Test Order Dependency – A test may fail because of the tests that are running before or after it. A good test should be isolated and should set up the state it depends on explicitly.

Conclusion

Test flakiness is a common problem across every engineering organization, and unfortunately flaky tests will never disappear completely. However, when addressed deliberately, they can be kept to a minimum, which improves the overall health of your automated test suite. By using Test Studio’s new debugging feature, testers can take the right actions to reduce inefficient test troubleshooting and avoid excessive test maintenance in the long run.

Ivaylo Todorov

Ivaylo is a QA Engineer on the Progress Test Studio team, where he helps build a better and more reliable product. Before starting as a QA Engineer, Ivaylo spent three years as a Technical Support Engineer for Test Studio, doing his best to guide customers in their automation endeavours. Ivaylo enjoys skiing, cooking and portrait photography.