Building Faster Backends in ASP.NET Core

Web app performance tuning can be a complicated beast, but there are several common-sense techniques developers can use to streamline the work done on the backend server. Let's take a look at some popular performance tips and tools of the trade.

Web application performance is often ignored until it's too late. If developers aren't careful, small inefficiencies can pile up, leading to a slow, clunky web app. To avoid this fate, the key is consistency: always stay aware of performance bottlenecks. Many small improvements over time are cheaper and easier than trying to cram them in at the end of a project.

We recently looked at how to build faster frontends in ASP.NET Core. Now let's take a look at the other half of the equation - the web server backend. Optimizing backend performance is different from optimizing a frontend web app. Most frontend performance improvements focus on the size of what goes over the wire. Backend performance, on the other hand, is more focused on getting data efficiently and controlling responses. This article takes a deep dive into backend performance tuning, covering some tools of the trade and common techniques that can help.

Measuring Speed

If you want to improve the performance of your web application, you must first measure it. Measuring performance can be tricky business: variations caused by other processes running alongside your app make exact performance numbers hard to obtain, so never trust a single measurement. If you run the same operation under the same conditions a few times, you'll get a range that's good enough to optimize against. Additionally, some performance issues only show up when the application is running under user load.

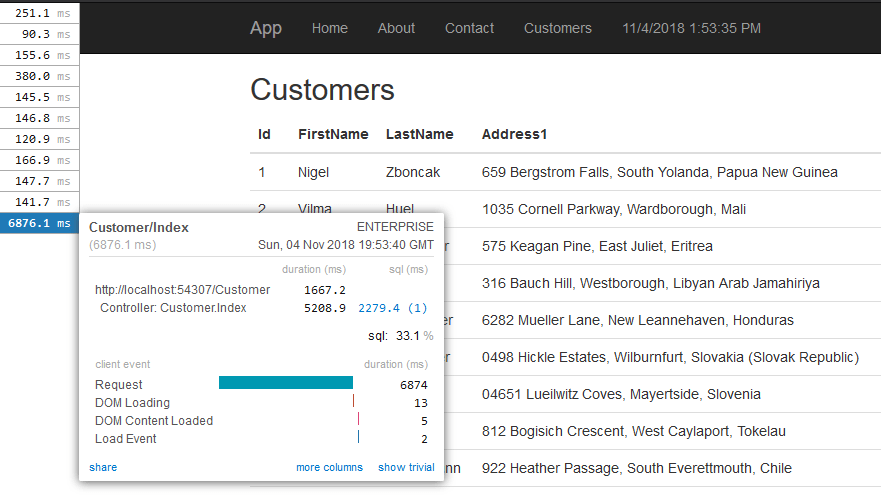
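To put the "run it a few times" advice into practice for a quick local check, you can time an operation repeatedly and look at the spread instead of a single number. This is a minimal sketch using only Stopwatch; SampleWorkload is a hypothetical stand-in for the code you actually want to measure. For rigorous micro-benchmarks, a dedicated library such as BenchmarkDotNet handles warm-up and statistics for you.

```csharp
using System;
using System.Diagnostics;
using System.Linq;

// Runs an operation several times and returns each elapsed time in milliseconds,
// so you look at a range instead of trusting a single number.
double[] MeasureRuns(Action operation, int runs)
{
    // Warm-up run: the first call includes JIT compilation, so it is not recorded.
    operation();

    var timings = new double[runs];
    for (int i = 0; i < runs; i++)
    {
        var sw = Stopwatch.StartNew();
        operation();
        sw.Stop();
        timings[i] = sw.Elapsed.TotalMilliseconds;
    }
    return timings;
}

// Hypothetical workload standing in for the code under test.
void SampleWorkload()
{
    long total = 0;
    for (int i = 0; i < 1_000_000; i++) total += i % 7;
    GC.KeepAlive(total);
}

var results = MeasureRuns(SampleWorkload, runs: 5);
var sorted = results.OrderBy(t => t).ToArray();

// Report the range; with an odd number of runs, the middle element is the median.
Console.WriteLine($"min {sorted[0]:F2} ms, median {sorted[sorted.Length / 2]:F2} ms, max {sorted[^1]:F2} ms");
```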

To measure performance, you'll likely want two different types of tools. The first is a profiling tool that measures the performance of individual requests. Many tools can do this, but one of the most popular is MiniProfiler. MiniProfiler is simply a NuGet package you pull into your web application project. It measures how long the different parts of each request take and displays the results while you run your application. Timings are shown unobtrusively in the top-right corner of the page, and you can expand them for a detailed breakdown of how long each step took on your machine. Here's an example of the output from MiniProfiler:

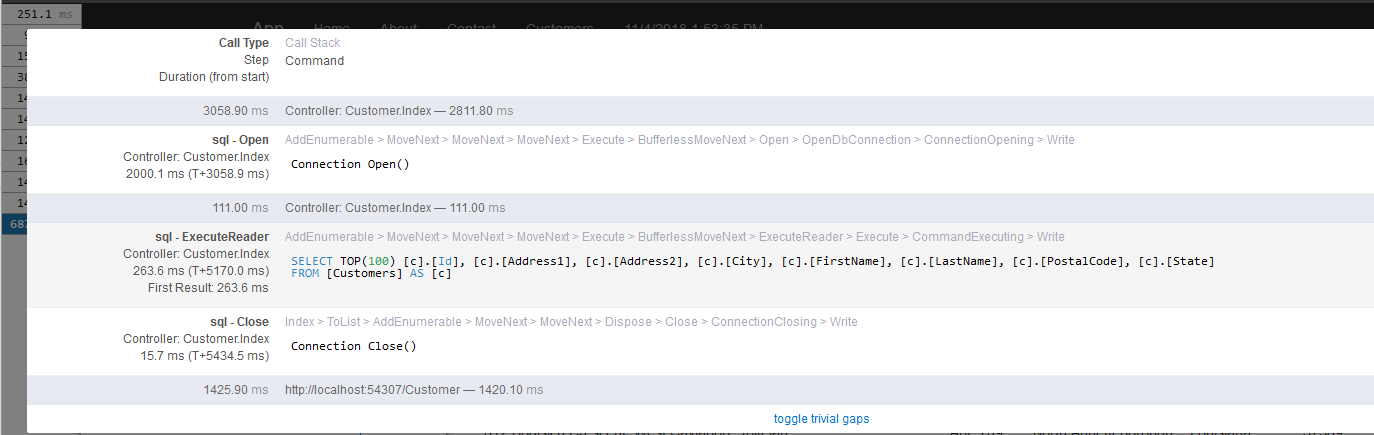

MiniProfiler can also profile the SQL queries that Entity Framework sends, which makes it easy to find long-running queries, and it flags duplicate SQL queries as well. Here's an example:

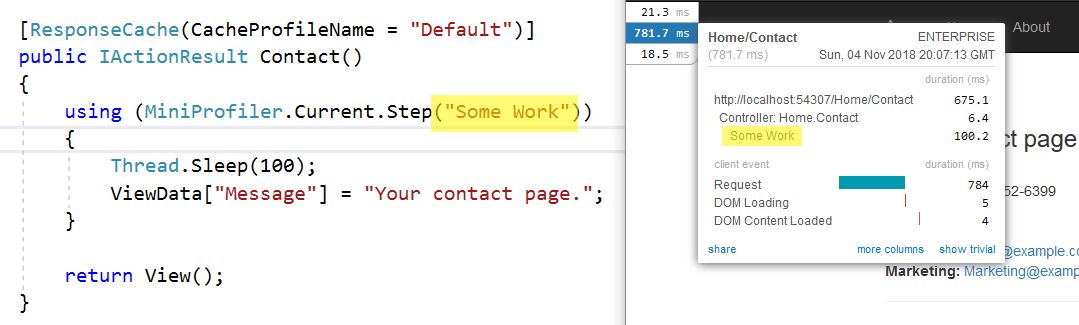
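As a minimal sketch, assuming an ASP.NET Core 3.x-style Startup class plus the MiniProfiler.AspNetCore.Mvc and MiniProfiler.EntityFrameworkCore packages, registering the profiler and its Entity Framework Core integration might look like this. The "/profiler" route path is an arbitrary example, not a required value.

```csharp
using Microsoft.AspNetCore.Builder;
using Microsoft.Extensions.DependencyInjection;

public class Startup
{
    public void ConfigureServices(IServiceCollection services)
    {
        services.AddControllersWithViews();

        // MiniProfiler.AspNetCore.Mvc: registers the profiler and its result pages.
        services.AddMiniProfiler(options =>
        {
            // Optional: base path for MiniProfiler's resources (example value).
            options.RouteBasePath = "/profiler";
        })
        // MiniProfiler.EntityFrameworkCore: records the SQL that EF Core executes.
        .AddEntityFramework();
    }

    public void Configure(IApplicationBuilder app)
    {
        // Register early so most of the request pipeline is included in the timings.
        app.UseMiniProfiler();
        app.UseStaticFiles();
        app.UseRouting();
        app.UseEndpoints(endpoints => endpoints.MapDefaultControllerRoute());
    }
}
```

To render the timings on the page, add the `<mini-profiler />` tag helper to your layout and register it in _ViewImports.cshtml with `@addTagHelper *, MiniProfiler.AspNetCore.Mvc`.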

You can also add custom profiling steps to your web application. If you have a long-running process, you can break it down into steps and have MiniProfiler track how long each one takes.
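A sketch of custom steps, assuming MiniProfiler is already registered as above. OrderImportService and the step names are hypothetical, and Task.Delay stands in for the real work. Each using block shows up as a separate, named timing in the profiler output.

```csharp
using System.Threading.Tasks;
using StackExchange.Profiling;

// Hypothetical service with a long-running import split into profiled steps.
public class OrderImportService
{
    public async Task ImportOrdersAsync()
    {
        // MiniProfiler.Current is null when profiling is off; the Step extension
        // method is null-safe and returns null, which a using statement accepts.
        using (MiniProfiler.Current.Step("Download order file"))
        {
            await Task.Delay(200); // placeholder for downloading the file
        }

        using (MiniProfiler.Current.Step("Parse and validate"))
        {
            await Task.Delay(100); // placeholder for parsing
        }

        using (MiniProfiler.Current.Step("Save to database"))
        {
            await Task.Delay(150); // placeholder for the database write
        }
    }
}
```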

MiniProfiler is a great tool for testing the performance of an application while you're using it, but it doesn't collect aggregate performance data. For that, you'll likely need an application performance monitoring (APM) tool. Application Insights and New Relic are popular, but there are lots of options. These tools measure the overall performance of your web app, tracking average response times and flagging slow responses for you.
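For Application Insights, a minimal sketch of the registration, assuming the Microsoft.ApplicationInsights.AspNetCore package and a connection string stored in configuration (recent SDK versions read it from the ApplicationInsights:ConnectionString key). Other APM products, New Relic included, use their own agents and setup steps.

```csharp
using Microsoft.Extensions.DependencyInjection;

public class Startup
{
    public void ConfigureServices(IServiceCollection services)
    {
        // Collects request rates, response times, failures, and dependency calls
        // (such as SQL and outgoing HTTP) without further code changes.
        services.AddApplicationInsightsTelemetry();

        services.AddControllersWithViews();
    }
}
```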

Once you have your measurement tools in place, you can move on to fixing problems. When fixing performance issues, take measurements before and after each intervention. It's also advisable to change only one thing at a time - if you change several factors and re-test, you won't know which (if any) of those interventions worked. Improving application performance is all about running experiments, and measuring performance tells you which experiments worked.

Another handy utility for measuring performance is Telerik Test Studio, a test automation solution popular with developers and QA engineers. While its primary goal is automating UI tests for web, desktop, and mobile apps, Test Studio is also helpful for load and performance testing. Developers can capture HTTP(S) traffic and customize requests to gauge their impact on performance, and recorded Test Studio web tests can be reused for load testing. The bottom line is that developers should use every tool at their disposal to measure speed and see how performance tuning helps.

Performance Tips

There are a lot of performance tuning techniques, and the best ones are based on real production applications. Performance tips cannot be applied blindly, though: you need to measure each intervention and make sure it works for your app. Just because something worked in one app doesn't mean it will help another. Let's do a quick rundown of some common performance tuning techniques for your backend app server.

Dealing with Databases

Data is pivotal for any app, but the database is also the most common performance bottleneck. Your database queries need to be efficient - period. Using your favorite profiler, find the offending slow-running query and try running it directly against the database. If you're using SQL Server, you can run the query in SQL Server Management Studio and inspect its execution plan. In a relational database, make sure the columns you filter and join on are covered by appropriate indexes; if they aren't, add the missing indexes and measure again. If your query is complex, try simplifying it or splitting it into smaller pieces.
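If you use Entity Framework Core migrations, you can declare an index in the model so it's created along with the schema. A minimal sketch, where the Order entity, ShopContext, and the indexed columns are hypothetical; choose columns based on what your slow queries actually filter and sort on.

```csharp
using System;
using Microsoft.EntityFrameworkCore;

// Hypothetical entity, for illustration only.
public class Order
{
    public int Id { get; set; }
    public int CustomerId { get; set; }
    public DateTime OrderDate { get; set; }
    public decimal Total { get; set; }
}

public class ShopContext : DbContext
{
    public ShopContext(DbContextOptions<ShopContext> options) : base(options) { }

    public DbSet<Order> Orders => Set<Order>();

    protected override void OnModelCreating(ModelBuilder modelBuilder)
    {
        // Assumed query pattern: orders filtered by customer and sorted by date,
        // so a composite index on both columns. A migration emits CREATE INDEX for it.
        modelBuilder.Entity<Order>()
            .HasIndex(o => new { o.CustomerId, o.OrderDate });
    }
}
```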

If optimizing SQL queries is not your forte, there's a shortcut. In SQL Server Management Studio, go to Tools > Database Engine Tuning Advisor. Run your query through the tool and it will suggest indexes and other changes that can make it run faster.

Get the Right Stuff

What your web app shows on a given screen should match what you fetch from your data tier; don't retrieve data you won't display. Be wary of reusing an expensive retrieval method that loads a whole graph of related objects just to populate a small grid.
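A sketch of fetching only what a grid displays. The in-memory rows below are hypothetical stand-ins for an Orders table; with Entity Framework Core you would write the same Select against a DbSet, and EF Core would translate it into a SQL SELECT of just those columns, so the large Notes column would never be read.

```csharp
using System;
using System.Linq;

// Hypothetical rows standing in for an Orders table, including a large Notes
// column that the grid never displays.
var orders = new[]
{
    new { Id = 1, Customer = "Contoso", Total = 120.50m, Notes = new string('x', 10_000) },
    new { Id = 2, Customer = "Fabrikam", Total = 75.00m, Notes = new string('y', 10_000) },
};

// Project only the three columns the grid shows.
var gridRows = orders
    .Select(o => new { o.Id, o.Customer, o.Total })
    .ToList();

foreach (var row in gridRows)
    Console.WriteLine($"{row.Id}: {row.Customer} {row.Total}");
```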

Another thing to consider is that your users probably aren't going to read 10,000 items you push to the client side - at least, not all at once. Consider fetching data in batches and using pagination to limit the size of the datasets you retrieve. Modern UI libraries for web apps, such as Kendo UI and Telerik UI for ASP.NET MVC/Core, already include smart controls that work seamlessly with paged chunks of data, so developers don't need to reinvent the wheel.
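Server-side paging usually comes down to a stable sort followed by Skip and Take. This sketch runs against an in-memory IQueryable; pointed at an EF Core DbSet, the same chain is translated into SQL (OFFSET/FETCH on SQL Server), so only one page of rows leaves the database. GetPage and the sample IDs are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Linq.Expressions;

// Returns one page of results; pageNumber is 1-based.
// The OrderBy matters: without a stable sort, the database may return rows
// in any order, and consecutive pages can overlap or skip items.
List<T> GetPage<T, TKey>(IQueryable<T> source, Expression<Func<T, TKey>> orderBy,
    int pageNumber, int pageSize)
{
    if (pageNumber < 1) throw new ArgumentOutOfRangeException(nameof(pageNumber));
    if (pageSize < 1) throw new ArgumentOutOfRangeException(nameof(pageSize));

    return source
        .OrderBy(orderBy)
        .Skip((pageNumber - 1) * pageSize)
        .Take(pageSize)
        .ToList();
}

// 10,000 hypothetical item IDs standing in for a large table.
var itemIds = Enumerable.Range(1, 10_000).AsQueryable();

var page3 = GetPage(itemIds, id => id, pageNumber: 3, pageSize: 25);
Console.WriteLine($"Page 3: items {page3.First()}..{page3.Last()}"); // Page 3: items 51..75
```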

Cache Slow Calculations

The best way to get data more quickly is to not get it at all. Smart use of caching can radically improve your web application's performance. In ASP.NET Core, data caching comes in two flavors - in-memory caching and distributed caching.

In-memory caching stores cached data in the web server's own memory. While this is the easiest cache to use, it's also limited to a single server: if you run multiple servers, each one keeps its own separate cache.
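A minimal sketch of IMemoryCache, assuming the Microsoft.Extensions.Caching.Memory package (part of ASP.NET Core; in a web app you'd call services.AddMemoryCache() and inject IMemoryCache instead of constructing it). The sales-total calculation, key format, and 10-minute expiration are hypothetical. All caching for this value goes through one method, which keeps the key and expiration policy in a single place.

```csharp
using System;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Memory;

IMemoryCache cache = new MemoryCache(new MemoryCacheOptions());
int slowCalls = 0;

// Hypothetical slow calculation, e.g. an aggregate report query.
async Task<decimal> CalculateSalesTotalAsync(int year)
{
    slowCalls++;
    await Task.Delay(100); // stand-in for an expensive query
    return year * 10m;
}

// The single entry point for this cached value: on a miss, the factory runs
// and its result is stored with an absolute expiration.
Task<decimal> GetSalesTotalAsync(int year) =>
    cache.GetOrCreateAsync($"sales-total:{year}", entry =>
    {
        entry.AbsoluteExpirationRelativeToNow = TimeSpan.FromMinutes(10);
        return CalculateSalesTotalAsync(year);
    });

var first = await GetSalesTotalAsync(2024);  // cache miss: runs the calculation
var second = await GetSalesTotalAsync(2024); // cache hit: no calculation
Console.WriteLine($"{first} {second}, slow calls: {slowCalls}"); // 20240 20240, slow calls: 1
```

Note that GetOrCreateAsync doesn't lock, so two requests that miss the cache at the same moment can both run the calculation; for most cached values that's acceptable.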

A distributed cache keeps cached data in a shared external data store. You can run as many servers as you want, and they'll all hit the same cache. The two most common data stores for distributed caching are SQL Server and Redis, both fairly easy to set up.
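A sketch of a Redis-backed distributed cache, assuming the Microsoft.Extensions.Caching.StackExchangeRedis package and a Redis instance at localhost:6379. The SQL Server alternative (Microsoft.Extensions.Caching.SqlServer) is shown commented out; its cache table must exist first, and the dotnet sql-cache tool can create it. ExchangeRateService, the key names, the connection string, and the 30-minute expiration are all hypothetical.

```csharp
using System;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Distributed;
using Microsoft.Extensions.DependencyInjection;

public class Startup
{
    public void ConfigureServices(IServiceCollection services)
    {
        services.AddStackExchangeRedisCache(options =>
        {
            options.Configuration = "localhost:6379"; // assumed Redis endpoint
            options.InstanceName = "MyApp:";          // hypothetical key prefix
        });

        // Alternative: SQL Server as the backing store.
        // services.AddDistributedSqlServerCache(options =>
        // {
        //     options.ConnectionString = "Server=.;Database=AppCache;Trusted_Connection=True;";
        //     options.SchemaName = "dbo";
        //     options.TableName = "AppCache";
        // });
    }
}

// Hypothetical consumer: every server instance reads and writes the same cache.
public class ExchangeRateService
{
    private readonly IDistributedCache _cache;

    public ExchangeRateService(IDistributedCache cache) => _cache = cache;

    public async Task<string> GetRatesJsonAsync()
    {
        var cached = await _cache.GetStringAsync("exchange-rates");
        if (cached != null) return cached;

        var json = await FetchRatesFromApiAsync();
        await _cache.SetStringAsync("exchange-rates", json, new DistributedCacheEntryOptions
        {
            AbsoluteExpirationRelativeToNow = TimeSpan.FromMinutes(30)
        });
        return json;
    }

    // Placeholder for a slow external call.
    private Task<string> FetchRatesFromApiAsync() => Task.FromResult("{\"USD\":1.0}");
}
```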

Because it's so effective, some developers will want to cache everything - this can be counterproductive. Caching adds complexity to your app and the performance boost isn't always worth it. Developers should limit caching to things that are hard to calculate or slow to change. Another technique is to try keeping all data caching logic in a single layer in your app - throwing cache commands all over the place can make it hard to track down potential errors.

Crush it with Response Compression

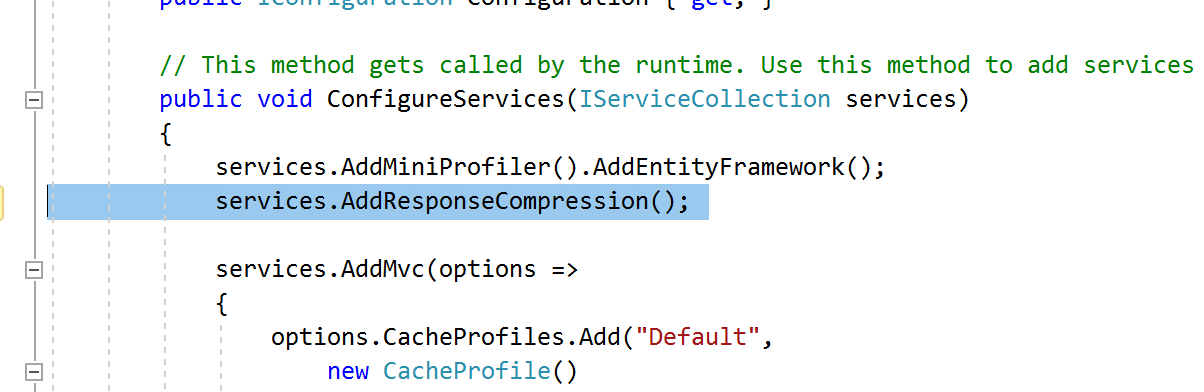

When improving the performance of your web applications, it's important to minimize the amount of data going over the wire. This is especially true for folks using your web app from their phones. One way to send fewer bits is to enable response compression. Kestrel, ASP.NET Core's built-in web server, doesn't compress responses on its own, and before ASP.NET Core you typically relied on IIS settings or custom code to compress commonly used responses such as CSS, JavaScript, and JSON. In ASP.NET Core, response compression middleware is built in, and its default settings already cover common text-based types, including HTML, CSS, JavaScript, and JSON. All you need to do is register it in your Startup.cs file.
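A minimal sketch of the registration in a Startup class. The extra SVG MIME type and the Fastest compression level are example choices, not requirements. When no providers are added explicitly, the middleware uses its default Brotli and Gzip providers.

```csharp
using System.IO.Compression;
using System.Linq;
using Microsoft.AspNetCore.Builder;
using Microsoft.AspNetCore.ResponseCompression;
using Microsoft.Extensions.DependencyInjection;

public class Startup
{
    public void ConfigureServices(IServiceCollection services)
    {
        services.AddResponseCompression(options =>
        {
            // Disabled by default for HTTPS because of BREACH/CRIME-style attacks;
            // only enable it after weighing that risk for your content.
            options.EnableForHttps = true;

            // Keep the defaults (CSS, JavaScript, JSON, HTML, ...) and add SVG as an example.
            options.MimeTypes = ResponseCompressionDefaults.MimeTypes.Concat(new[] { "image/svg+xml" });
        });

        // Trade a little compression ratio for lower CPU cost (example setting).
        services.Configure<GzipCompressionProviderOptions>(options =>
            options.Level = CompressionLevel.Fastest);

        services.AddControllersWithViews();
    }

    public void Configure(IApplicationBuilder app)
    {
        // Must come before the middleware whose responses should be compressed,
        // including static files.
        app.UseResponseCompression();
        app.UseStaticFiles();
        app.UseRouting();
        app.UseEndpoints(endpoints => endpoints.MapDefaultControllerRoute());
    }
}
```

If your app sits behind a server that can compress responses itself (IIS, Nginx, or Apache), that server-level compression is usually faster than the middleware, so measure both options.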

Don't Create a Logjam

While most logging frameworks keep their performance cost to a minimum, logging still has a cost. Excessive logging can slow down your application during periods of high load. In Production, log only what's necessary to support monitoring and routine troubleshooting, and save verbose logging for lower environments where you can afford the performance cost. Logging levels are also best kept configurable: if you need to temporarily turn up your logging to gather data for an issue, you should be able to make that happen without recompiling your app.
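With Microsoft.Extensions.Logging, minimum levels normally come from the "Logging:LogLevel" section of appsettings.json (with per-environment overrides such as appsettings.Production.json), so changing them doesn't require a recompile. The sketch below shows two ways to keep the logging cost low in code; CheckoutService, the event ID, and the messages are hypothetical.

```csharp
using System;
using Microsoft.Extensions.Logging;

public class CheckoutService
{
    private readonly ILogger<CheckoutService> _logger;

    // LoggerMessage.Define parses the message template once and returns a strongly
    // typed delegate that checks the log level before doing any formatting.
    private static readonly Action<ILogger, int, Exception?> CartLoaded =
        LoggerMessage.Define<int>(LogLevel.Debug, new EventId(1, nameof(CartLoaded)),
            "Loaded cart with {ItemCount} items");

    public CheckoutService(ILogger<CheckoutService> logger) => _logger = logger;

    public void Checkout(string[] cartItems)
    {
        // Cheap when Debug is disabled for this category (e.g. in Production).
        CartLoaded(_logger, cartItems.Length, null);

        // Guard work that exists only to build a log message.
        if (_logger.IsEnabled(LogLevel.Debug))
        {
            _logger.LogDebug("Cart contents: {Items}", string.Join(", ", cartItems));
        }

        _logger.LogInformation("Checkout completed");
    }
}
```

On .NET 6 and later, the [LoggerMessage] source generator produces the same kind of delegate with less boilerplate.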

Ace Your Async Requests

Asynchronous programming with C#'s async and await keywords is a fantastic way to improve the performance of your application, but be aware that these keywords don't run operations in parallel. Await suspends the current method until the awaited task completes, so if you await several async calls one after another, the request takes as long as it would if you made those calls consecutively.

This doesn't mean you should avoid async, however. Its benefit shows up under load: while an awaited I/O operation is in progress, the thread goes back to the pool to serve other requests. By reducing the number of threads sitting idle, async/await increases the throughput of your application rather than the speed of any single request.

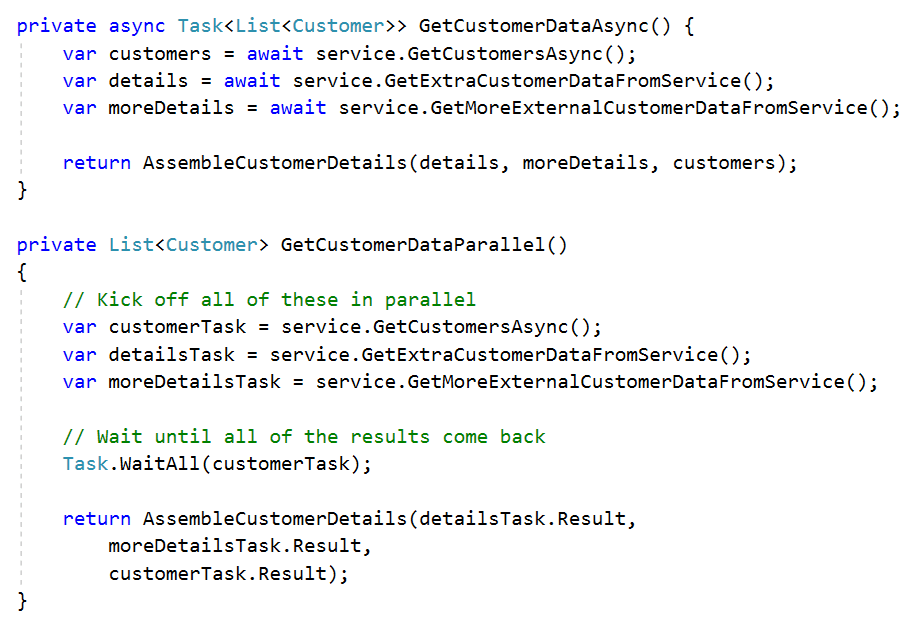

If you want to make an individual request faster, you need to work with task objects from the Task Parallel Library (TPL). By starting several tasks before awaiting any of them, you can run independent operations at the same time. If a method makes several slow, independent calls, such as calls to an external service, consider running them in parallel instead of awaiting each one in turn.

Compared with the standard async/await flow, managing task objects takes a bit more code, but running several slow requests in parallel will save time.
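A sketch contrasting the two approaches, assuming three hypothetical slow calls simulated with Task.Delay. The first method uses the standard async/await flow, awaiting each call in turn; the second starts all three tasks up front and awaits them together with Task.WhenAll.

```csharp
using System;
using System.Diagnostics;
using System.Threading.Tasks;

// Hypothetical slow external calls, simulated with Task.Delay.
async Task<string> GetWeatherAsync() { await Task.Delay(300); return "sunny"; }
async Task<int> GetStockLevelAsync() { await Task.Delay(300); return 42; }
async Task<decimal> GetExchangeRateAsync() { await Task.Delay(300); return 1.08m; }

// Standard async/await flow: each call starts only after the previous one
// finishes, so the total is roughly the sum of the delays (~900 ms).
async Task<string> LoadSequentiallyAsync()
{
    var weather = await GetWeatherAsync();
    var stock = await GetStockLevelAsync();
    var rate = await GetExchangeRateAsync();
    return $"{weather}/{stock}/{rate}";
}

// Task objects: start all three calls first, then wait for them together.
// The calls overlap, so the total is roughly the longest single delay (~300 ms).
async Task<string> LoadInParallelAsync()
{
    Task<string> weatherTask = GetWeatherAsync();
    Task<int> stockTask = GetStockLevelAsync();
    Task<decimal> rateTask = GetExchangeRateAsync();

    await Task.WhenAll(weatherTask, stockTask, rateTask);

    // The tasks are already complete, so these awaits return the results immediately.
    return $"{await weatherTask}/{await stockTask}/{await rateTask}";
}

var sw = Stopwatch.StartNew();
var sequential = await LoadSequentiallyAsync();
var sequentialMs = sw.ElapsedMilliseconds;

sw.Restart();
var parallel = await LoadInParallelAsync();
var parallelMs = sw.ElapsedMilliseconds;

Console.WriteLine($"sequential: {sequential} in {sequentialMs} ms");
Console.WriteLine($"parallel:   {parallel} in {parallelMs} ms");
```

One caution: Entity Framework Core doesn't support running multiple operations concurrently on the same DbContext instance, so this pattern fits independent calls such as external HTTP services better than several queries on a shared context.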

Make Your Apps Faster Today

Building performant web applications is a tough job, but ASP.NET Core has lots of tools and features that will help. Developers should begin by measuring performance with tools like MiniProfiler and Application Insights. Then, as you find bottlenecks, go through the checklist to see which interventions help your app. Performance tuning takes patience and experimentation - hopefully, with frontend and backend processes streamlined, you can turn the right knobs to see performance improvements. Performant web apps mean delighted users and elevated engagement - cheers to that.

Dustin Ewers

Dustin Ewers is a software developer hailing from Southern Wisconsin. He helps people build better software. Dustin has been building software for over 10 years, specializing in Microsoft technologies. He is an active member of the technical community, speaking at user groups and conferences in and around Wisconsin. He writes about technology at https://www.dustinewers.com/.