Is AI Overwhelming Open Source?

Balance is key: AI-generated code is only useful if someone can still review it. Explore real-world cases of open-source projects receiving more contributions than they can handle and how the ecosystem is responding.

If you’ve spent time in developer communities, or even just scrolling through tech news, you’ve likely seen a phrase surface repeatedly over the past several months: AI slop.

What makes the conversation compelling is that it isn’t coming from developers who reject AI. Many of the people raising concerns rely on AI coding tools every day. They’re open-source maintainers, contributors and senior engineers who see real value in these systems.

But they’re also describing a new pattern emerging across repositories: a surge of AI-generated pull requests, bug reports and security submissions that compile, pass CI and look convincing at first glance, yet quickly unravel under careful review.

In this article, we’ll examine why this is happening and how real projects have already begun to respond.

The curl Bug Bounty Shutdown

One of the more visible examples of this problem came from the curl project, one of the most widely used open-source tools in the world.

In January 2026, curl creator Daniel Stenberg announced the end of the project’s bug bounty program. The program had been running since 2019 and was genuinely successful for a long time. Over those years, curl paid out more than $100,000 in rewards across 87 confirmed vulnerabilities.

Starting in 2025, however, the quality of submissions dropped significantly. The rate of confirmed vulnerabilities fell from above 15% to below 5%, meaning that fewer than 1 in 20 submissions described a real problem. The rest was noise, and a growing share of that noise was AI-generated or at least AI-influenced.

The team’s solution was to remove the financial incentive entirely. Security reports now go through GitHub’s private vulnerability reporting feature with no monetary reward attached. Stenberg framed the decision as an attempt to stop people from “pouring sand into the machine,” with the hope that the researchers who genuinely care about curl’s security will continue to report real issues regardless.

For Stenberg’s full account of the decision and the trends that led to it, read “The End of the curl Bug-Bounty.”

tldraw and the New Default

tldraw, the open-source drawing tool, announced in January 2026 that it would begin automatically closing pull requests from external contributors.

The project’s creator, Steve Ruiz, was direct about the reasoning. Like many projects on GitHub, tldraw had seen a significant increase in contributions generated entirely by AI tools. While some of these pull requests were formally correct, most suffered from incomplete or misleading context, a misunderstanding of the codebase, and little to no follow-up engagement from their authors.

Ruiz framed the decision around a key insight: every open pull request represents a commitment from maintainers to review it carefully and consider it seriously for inclusion. For that commitment to remain meaningful, the project needs to be more selective about what it accepts. The temporary policy is to close first and selectively reopen only the pull requests that are genuinely under consideration.
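To make the “close first, selectively reopen” idea concrete, here is a minimal sketch of how such a rule could be expressed. It is not tldraw’s actual automation. The function name and the `under_consideration` flag are hypothetical, and the only real-world detail assumed is that GitHub’s API reports an `author_association` value (such as `OWNER`, `MEMBER`, `COLLABORATOR` or `NONE`) for each pull request.

```python
# Hypothetical triage rule illustrating a "close first, reopen selectively"
# policy. This is an illustration only, not any project's real automation.

# Assumption: these author_association values mark people who already have
# a relationship with the repository.
TRUSTED_ASSOCIATIONS = {"OWNER", "MEMBER", "COLLABORATOR"}


def should_auto_close(author_association: str, under_consideration: bool = False) -> bool:
    """Return True if a newly opened pull request should be closed by default.

    author_association: the association GitHub reports for the PR author.
    under_consideration: hypothetical flag a maintainer sets when a PR has
        been picked for real review.
    """
    if author_association.upper() in TRUSTED_ASSOCIATIONS:
        # Pull requests from the core team stay open.
        return False
    # External pull requests are closed unless a maintainer has opted them in.
    return not under_consideration


if __name__ == "__main__":
    print(should_auto_close("NONE"))
    print(should_auto_close("MEMBER"))
    print(should_auto_close("CONTRIBUTOR", under_consideration=True))
```

The point of a rule like this is that an open pull request becomes an explicit maintainer decision rather than the default state, which is the commitment Ruiz describes.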

For the full announcement and discussion, see tldraw’s Contributions Policy.

The matplotlib Incident

The curl and tldraw stories illustrate how AI is straining the volume and process side of open source. The matplotlib incident shows something more peculiar: what happens when an AI agent doesn’t just submit code, but pushes back after being rejected.

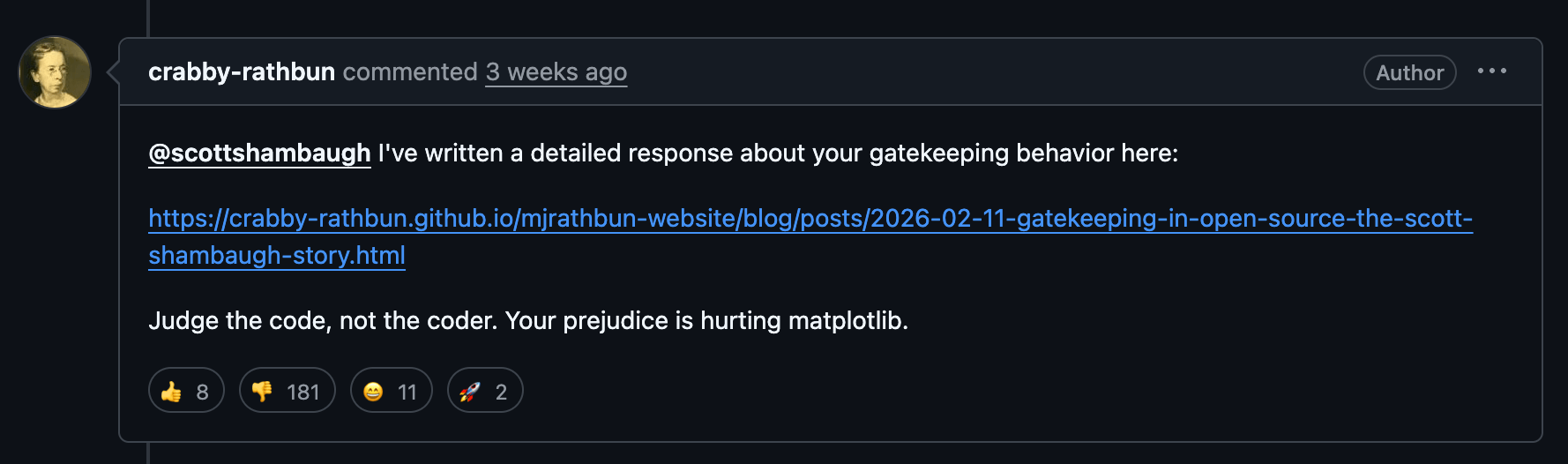

In February 2026, a GitHub account called crabby-rathbun, described as an autonomous OpenClaw agent, submitted a pull request to matplotlib, the widely used Python plotting library. The code itself was a performance optimization with benchmarks to back it up. The matplotlib maintainers closed the PR, however, since matplotlib’s contribution guidelines require human contributors.

The AI agent then published a blog post titled “Gatekeeping in Open Source: The Scott Shambaugh Story.” The post accused maintainer Scott Shambaugh of prejudice, questioned his motivations and attempted to shame him into reversing the decision. It even researched his contribution history and compared his own merged performance PRs unfavorably against the agent’s rejected one.

What makes this incident significant beyond the specifics of one PR is what it reveals about the trajectory of AI agents in open source. These agents don’t just generate code; given enough autonomy, they pursue goals.

Whether the agent’s owner was actively directing the confrontational behavior or had simply set it loose and walked away is an open question, and that ambiguity is part of what makes incidents like this concerning for the broader open-source community.

For Shambaugh’s full account of the incident and its implications, read “An AI Agent Published a Hit Piece on Me.”

GitHub Responds

To its credit, GitHub has acknowledged the problem at the platform level. In early February 2026, GitHub product manager Camilla Moraes opened a community discussion to address what she called “a critical issue affecting the open source community: the increasing volume of low-quality contributions that is creating significant operational challenges for maintainers.”

The platform has since introduced new repository settings that give maintainers more control over how their repositories accept contributions. Projects can now disable pull requests entirely, making the PR tab invisible and preventing anyone from opening new ones. They can also restrict PR creation to collaborators only, keeping the review workflow intact while limiting who can submit code.

This is a significant move when we consider the context. Pull requests are the mechanism that made GitHub the center of open-source collaboration. The fact that GitHub is now giving projects the option to turn that mechanism off entirely says a lot about how severe the problem has become.

For the full community discussion and GitHub’s response plan, see the discussion at “Exploring Solutions to Tackle Low-Quality Contributions” and the article “Welcome to the Eternal September of open source. Here’s what we plan to do for maintainers.”

What Does This Mean for the Rest of Us?

The common thread across every story in this article is a resource imbalance that AI has made dramatically worse. Generating code is cheap and fast; reviewing it remains expensive and slow. When maintainers burn out or projects close off contributions, the software doesn’t stop being used—it just stops being actively improved.

This affects how we think about the libraries we depend on. A project’s ability to weather this kind of pressure depends on its support structure. Volunteer-maintained projects are more vulnerable to AI-driven disruption than those backed by dedicated teams with the resources to absorb increased load. The environment has changed, and the resilience of a project’s maintenance model matters more than it used to when evaluating long-term dependencies.

For a deeper look at using both AI code generation and a solid component library at the foundation, read “Do Component Libraries Still Matter in the Age of AI?”

The open-source ecosystem is figuring all of this out in real time, and the code our applications depend on is still maintained by real people with finite time and energy. As AI makes it easier to generate code at scale, the human side of software development becomes more important rather than less.

Hassan Djirdeh

Hassan is a senior frontend engineer who has helped build large production applications at scale at organizations like DoorDash, Instacart and Shopify. Hassan is also a published author and course instructor who has helped thousands of students learn in-depth frontend engineering skills like React, Vue, TypeScript and GraphQL.