The AI Observability Platform Built for Production Agents

See exactly how your agents reason, call tools and behave in production, so you can understand what’s happening and why. Debug failures in minutes, cut token waste and ship with confidence, with first-class support for .NET, Python and JavaScript.

The Problem: Why AI Agents Are Hard to Debug

Debugging AI agents isn’t like debugging traditional software. Their behavior emerges across prompts, models, tools and state rather than a single, deterministic code path.

No Breakpoints

You can’t step through an agent’s reasoning the way you step through code.

No Clear Root Cause

Failures and hallucinations span prompts, tools, retrieval and models with no stack trace to follow.

Non-Deterministic Behavior

The same input can produce different outputs, making bugs hard to reproduce and isolate.

Expensive Debugging

Every investigation consumes tokens, API calls, and developer time.

No Shared View

In production, teams lack a common interface for understanding what agents are doing and why.

AI Observability for Agents in Production

The Progress AI Observability Platform is a developer-first platform that gives you visibility into how AI agents behave across models, tools and sessions, so you can see issues as they happen and understand their impact. One place to debug, optimize, monitor, validate and collaborate.

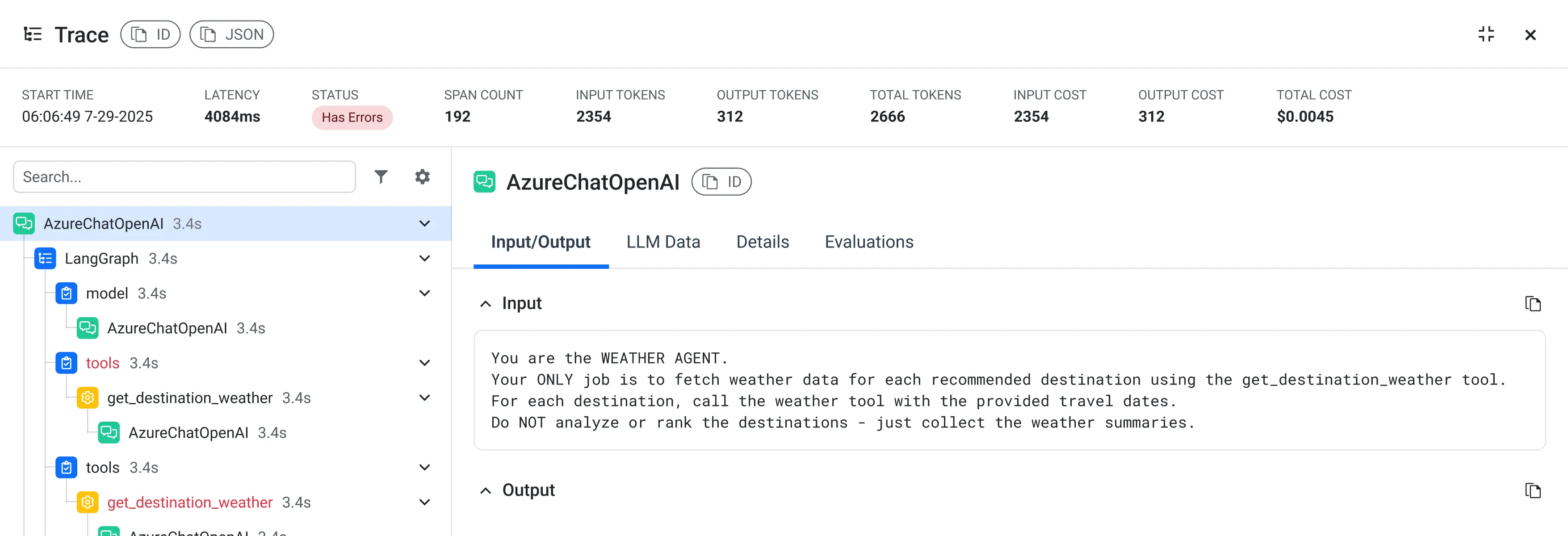

Capture every step of agent execution from prompts and reasoning chains to tool calls, retrieval and model responses. Visualize how decisions unfold across multi-step and multi-agent workflows. Understand not just what an agent returned, but why.

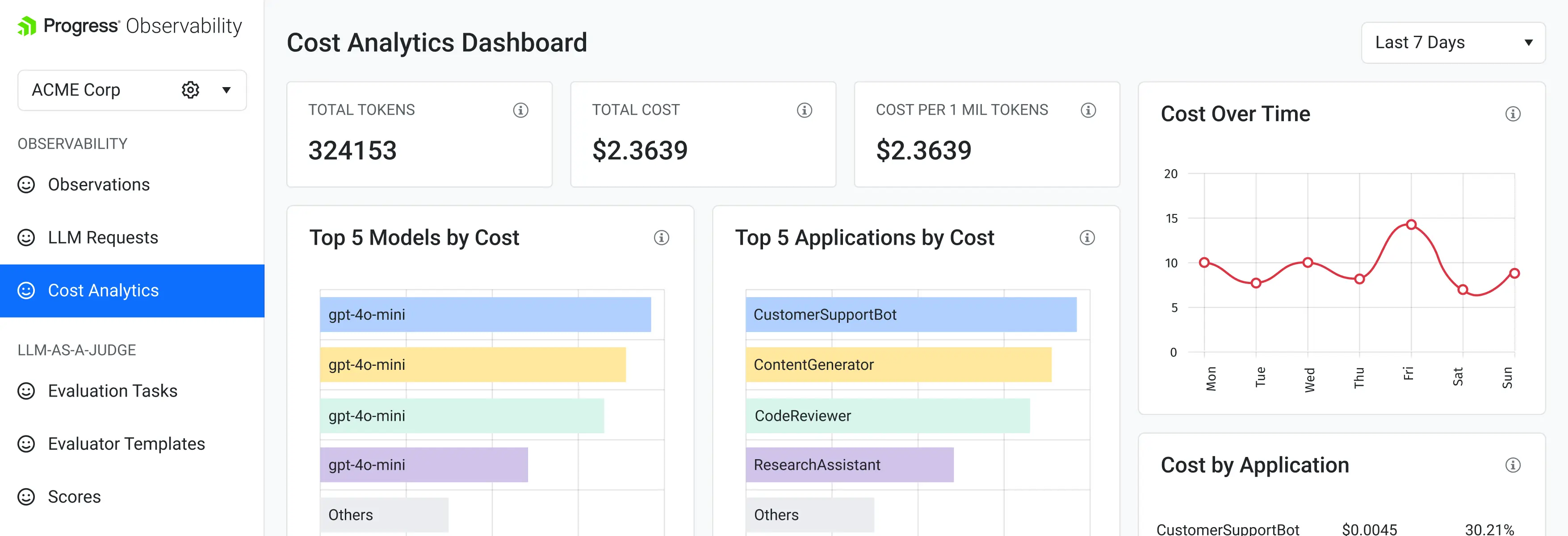

Track token consumption, response times and cost per model, per agent, per workflow. See exactly where your LLM spend goes and identify inefficiencies before they scale.
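As a rough illustration of what per-model and per-agent cost attribution involves, the sketch below groups model calls by a chosen field and sums an estimated cost. The record fields, model names and prices are assumptions made up for this example; they are not the platform's schema or any provider's real pricing.

```python
from collections import defaultdict

# Hypothetical per-1K-token prices in USD; real prices vary by provider and model.
PRICE_PER_1K_TOKENS = {"model-a": 0.01, "model-b": 0.002}


def cost_by(records, key):
    """Sum the estimated cost of model calls, grouped by the given record field."""
    totals = defaultdict(float)
    for record in records:
        tokens = record["prompt_tokens"] + record["completion_tokens"]
        price = PRICE_PER_1K_TOKENS[record["model"]]
        totals[record[key]] += tokens / 1000 * price
    return dict(totals)


# Illustrative call records; the field names are assumptions for this sketch.
calls = [
    {"agent": "planner", "model": "model-a", "prompt_tokens": 1200, "completion_tokens": 300},
    {"agent": "planner", "model": "model-b", "prompt_tokens": 800, "completion_tokens": 200},
    {"agent": "researcher", "model": "model-b", "prompt_tokens": 4000, "completion_tokens": 1000},
]

per_agent = cost_by(calls, "agent")
per_model = cost_by(calls, "model")
```

Grouping the same records by different fields is what lets one data set answer both "which agent is expensive?" and "which model is expensive?".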

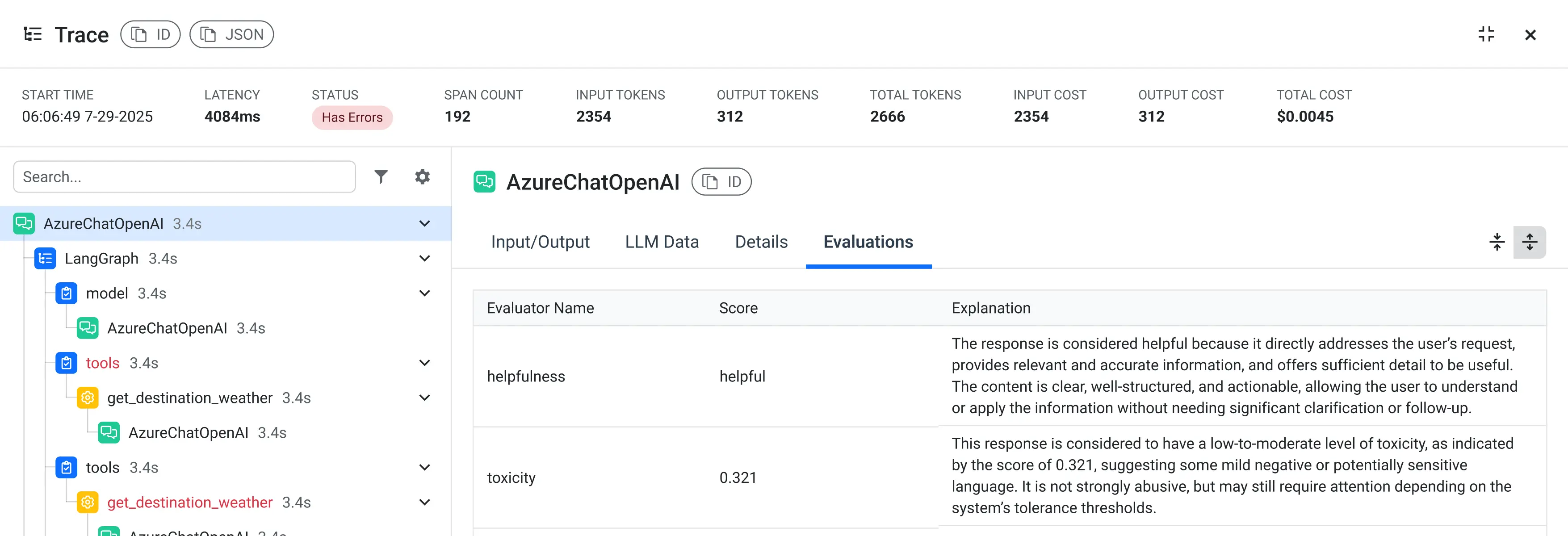

Run LLM-as-a-judge evaluations on captured traces. Score quality, usefulness and policy alignment. Compare prompt, model or workflow changes side by side using real execution data.
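To make the side-by-side comparison concrete, here is a minimal sketch that summarizes judge scores for two variants scored on the same traces. The scores are hypothetical, the judge model itself is out of scope, and the function name and output fields are assumptions for illustration rather than part of the product.

```python
from statistics import mean


def compare_variants(baseline_scores, candidate_scores):
    """Summarize judge scores for two variants evaluated on the same traces."""
    if len(baseline_scores) != len(candidate_scores):
        raise ValueError("both variants must be scored on the same traces")
    # Count the traces where the candidate scored strictly higher.
    wins = sum(c > b for b, c in zip(baseline_scores, candidate_scores))
    return {
        "baseline_mean": mean(baseline_scores),
        "candidate_mean": mean(candidate_scores),
        "candidate_wins": wins,
        "traces": len(baseline_scores),
    }


# Hypothetical 1-5 quality scores from a judge model, one per captured trace.
baseline = [3, 4, 2, 5]
candidate = [4, 4, 3, 5]
summary = compare_variants(baseline, candidate)
```

Pairing scores trace by trace, rather than comparing two unrelated samples, keeps the comparison tied to the same real inputs.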

Three Steps to Observable Agents

The platform fits into your existing agent workflows. Capture execution data as your agents run, then turn that data into clear, actionable insight.

Instrument your AI agents with lightweight integrations that capture prompts, model calls, tool usage, retrieval steps and state.

Observe agent behavior end to end using session- and trace-level views designed specifically for multi-step and multi-agent workflows.

Improve reliability, performance, and cost by debugging failures, running evaluations and tuning orchestration and model choices using real production data.

Get Started in Minutes

// .NET - Install & Instrument

// 1. Install
dotnet add package Progress.Observability.Instrumentation

// 2. Instrument
chatClient = chatClient.AddObservability(options =>
{
    options.AppName = Environment.GetEnvironmentVariable("OBSERVABILITY_APP_NAME")!;
    options.ApiKey = Environment.GetEnvironmentVariable("OBSERVABILITY_API_KEY")!;
});

# Python - Install & Instrument

# 1. Install
pip install progress-observability

# 2. Instrument
import os

from progress_observability import Observability

Observability.instrument(
    app_name=os.getenv("OBSERVABILITY_APP_NAME"),
    api_key=os.getenv("OBSERVABILITY_API_KEY"),
)

// TypeScript - Install & Instrument

// 1. Install
npm install progress-observability

// 2. Instrument
import { Observability } from 'progress-observability';

Observability.instrument({
    appName: process.env.OBSERVABILITY_APP_NAME,
    apiKey: process.env.OBSERVABILITY_API_KEY
});

What the AI Observability Platform Helps You Do

The Progress AI Observability Platform gives developers the visibility and control they need to operate AI agents reliably in production. Diagnose issues faster, prevent outages before they happen and reduce the cost of critical downtime, often by half.

“We cut our agent debugging time from 4 hours to 20 minutes. Being able to see the full trace - prompts, retrieval, tool calls - in one view changed how our team works.”

Early Access Program participant

Built for Real-World Agent Workloads

Empower teams to debug failures, improve reliability and control cost across real-world agent workloads.

Debugging Hallucinations and Failures

- Trace agent responses back through retrieval, tool calls and model decisions to understand where incorrect behavior originates.

- Identify whether hallucinations are caused by missing context, failed retrieval, tool errors or model limitations.

- Move from reactive debugging to repeatable diagnosis using real execution data.

Multi-Agent and Tool-Driven Workflows

- Visualize how multiple agents interact, coordinate tasks, and depend on shared tools or state.

- Detect loops, timeouts and cascading failures across agent boundaries.

- Understand how decisions made by one agent affect downstream behavior in the system.
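One simple way to think about loop detection is to count identical tool calls within a single trace and flag any that repeat too often. The sketch below does this with hypothetical step records and an arbitrary threshold; it is an illustration of the idea, not a description of how the platform detects loops.

```python
from collections import Counter


def find_repeated_calls(steps, threshold=3):
    """Return (agent, tool, arguments) keys seen at least `threshold` times."""
    counts = Counter(
        (step["agent"], step["tool"], step["arguments"]) for step in steps
    )
    return [key for key, n in counts.items() if n >= threshold]


# Hypothetical trace steps; arguments are kept as strings so they can be counted.
steps = [
    {"agent": "researcher", "tool": "search", "arguments": "q=pricing"},
    {"agent": "researcher", "tool": "search", "arguments": "q=pricing"},
    {"agent": "writer", "tool": "summarize", "arguments": "doc=1"},
    {"agent": "researcher", "tool": "search", "arguments": "q=pricing"},
]

suspects = find_repeated_calls(steps)
```

Here the researcher agent issues the same search three times, so that call is flagged, while the single summarize call is not.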

Controlling Cost as Usage Scales

- Track token usage and latency across agents, workflows and environments.

- Identify inefficiencies caused by unnecessary calls, poor orchestration or suboptimal model selection.

- Optimize spend as adoption grows without sacrificing reliability or output quality.

Who It Is For

From debugging to governance, built around real AI workflows.

For Developers

Debug Agent Failures in Minutes, Not Days

See exactly where and why an agent failed with step-by-step traces across prompts, retrieval, tools and model calls, so you can move from symptom to root cause without guesswork.

For Engineering Leaders

Make AI Spend Predictable as Usage Scales

Understand where tokens, latency and compute are going across agents and workflows, so you can optimize cost without slowing teams down or compromising quality.

For Enterprises

Ship AI Systems You Can Trust and Audit

Maintain visibility, control, and auditability across AI workflows with enterprise-grade security, access controls and compliance-ready observability.

Built for Developers

We designed our product to fit naturally into how developers build, test and run AI agents today.

- Lightweight setup without re-architecting your workflows

- Agent-native traces, sessions and environments

- Works with your existing models, tools and frameworks

- Native SDKs designed for Python, JavaScript and .NET developers

- Clear documentation with real-world examples

- One workflow for development and production debugging

Pricing

Simple, predictable pricing. Start free, scale as you grow.

No surprises, no hidden fees.

Free

To check things out

20K units / month

1 Seat

7 Days Data Retention

Community Support

- Agent Trace Explorer

- Cost Analytics Dashboard

- LLM-as-Judge Evaluations (basic)

- .NET / Python / TypeScript SDKs

Custom

To get it into production

Starting from 100K units / month

Unlimited Seats

Custom Data Retention

Dedicated Support

- Everything in Free

- Datasets & Experiment-Based Evaluation

- Real-Time & Historical LLM-as-Judge Evaluations

- Full Cost Attribution (per-agent, per-model, total costs)

- Anomaly Detection & Alerting

Works with Your Stack

The Progress AI Observability Platform integrates with the tools, frameworks and platforms teams already use to build and run AI agents.

- Languages & SDKs: .NET (C#), Python, JavaScript/TypeScript

- Agent Frameworks: Semantic Kernel, LangChain, LlamaIndex, AutoGen, Microsoft Agent Framework

- LLM Providers: Azure OpenAI, OpenAI, Anthropic

- AI Tooling: Microsoft.Extensions.AI, Microsoft AI Foundry, Progress RAG

- Enterprise SSO: Okta, Azure AD, SAML

- Open‑Source Models (OSS): Llama 2/3, Mistral, Mixtral, Falcon, Gemma, etc.

Frequently Asked Questions

The most common questions teams ask when evaluating AI observability for production agents.

Ready to See What Your AI Agents Are Actually Doing?

Get end-to-end visibility into your AI agents in minutes. Free to start, built to scale.