How We Test Software: Chapter Three Part II—Telerik Business Services


Have you wondered how the teams working on Telerik products test software? We continue with the next chapter in our detailed guide, giving you deeper insight into the processes of our Business Services. You can start back at Chapter One here.

An Introduction

You’re reading the fourth post in a series that’s intended to give you a behind-the-scenes look at how the Telerik teams, which are invested in Agile, DevOps and TDD development practices, go about ensuring product quality. We’ll share insights around the practices and tools our product development and web presence teams employ to confirm we ship high-quality software. We’ll go into detail so that you can learn not only the technical details of what we do but also why we do it. This will give you the tools to become testing experts.

Important note: After its completion, this series will be gathered up, updated/polished and published as an eBook. Interested? Register to receive the eBook by sending us an email at telerik.testing@telerik.com.


Chapter Three, Part Two: Telerik Business Services—Guarding Business Success 24/7

Incident Management

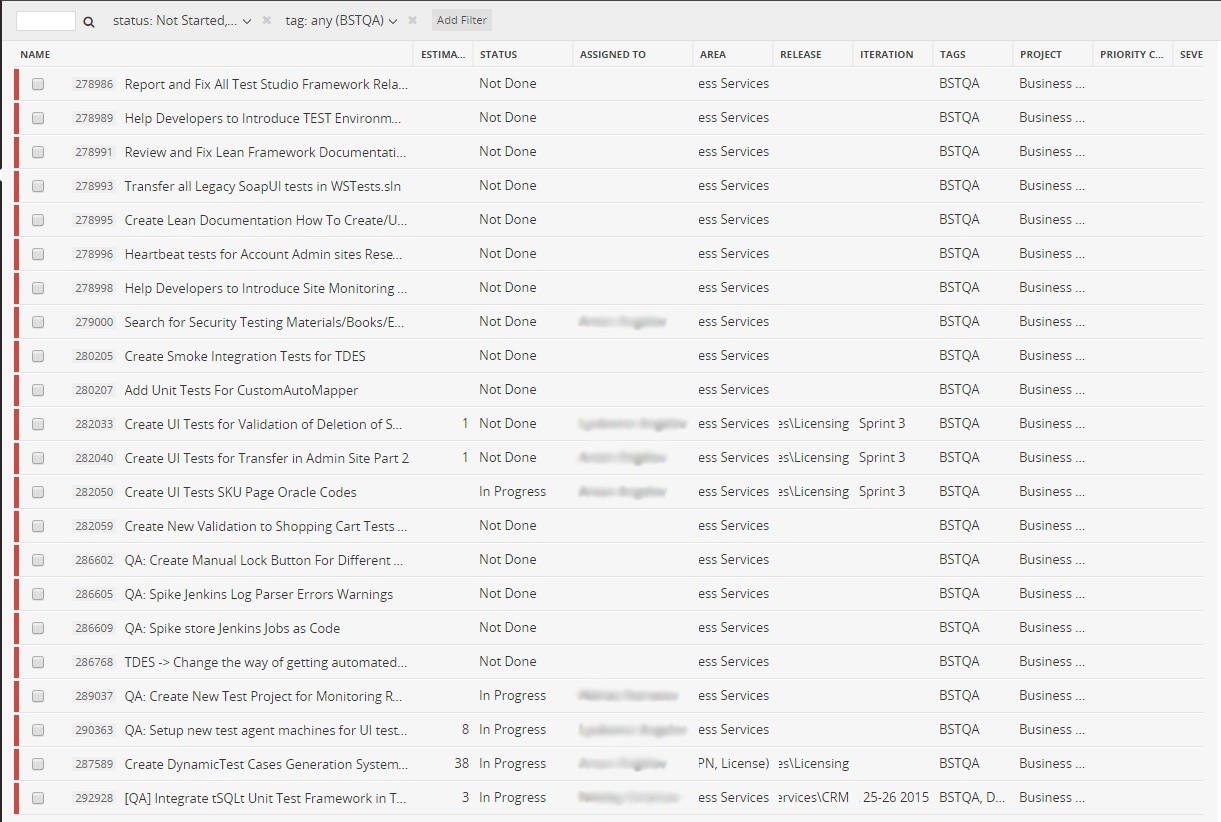

All work items in Business Services, including bugs, user stories, tasks and more, are tracked and managed in Telerik TeamPulse. In some teams, estimates of QA work routinely feed into the planning process. We have created a TeamPulse view with all internal QA tasks, which any QA engineer can pick up when they have capacity:

A lot of useful information is shared as articles on the portal. One of the most useful is the “How to test” section, in which we share many details about how we test various critical functionalities. There is a direct integration between Test Cases Management storage and the portal, which enables us to view detailed reports regarding the Business Services test cases.

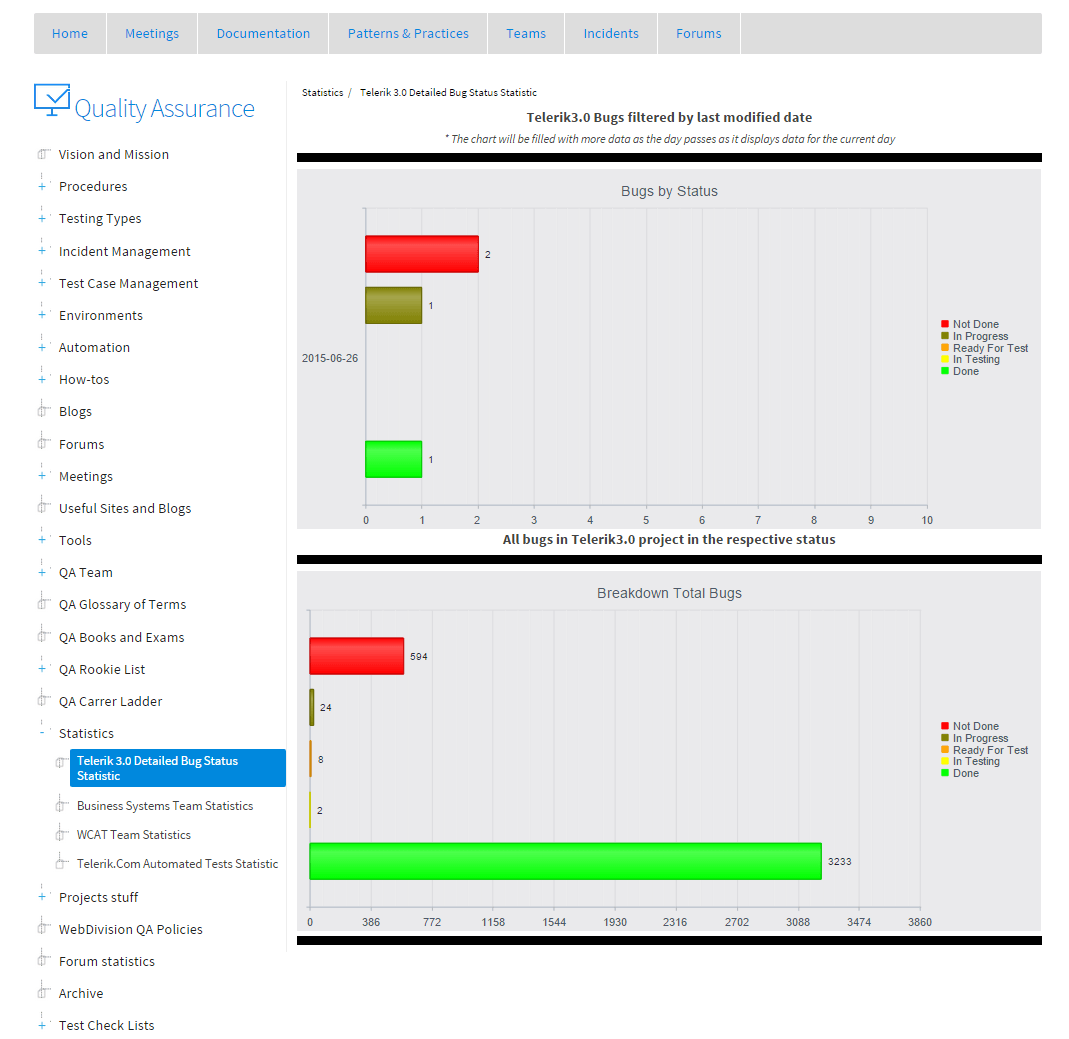

There is also a similar integration with the Incident Management System in TeamPulse:

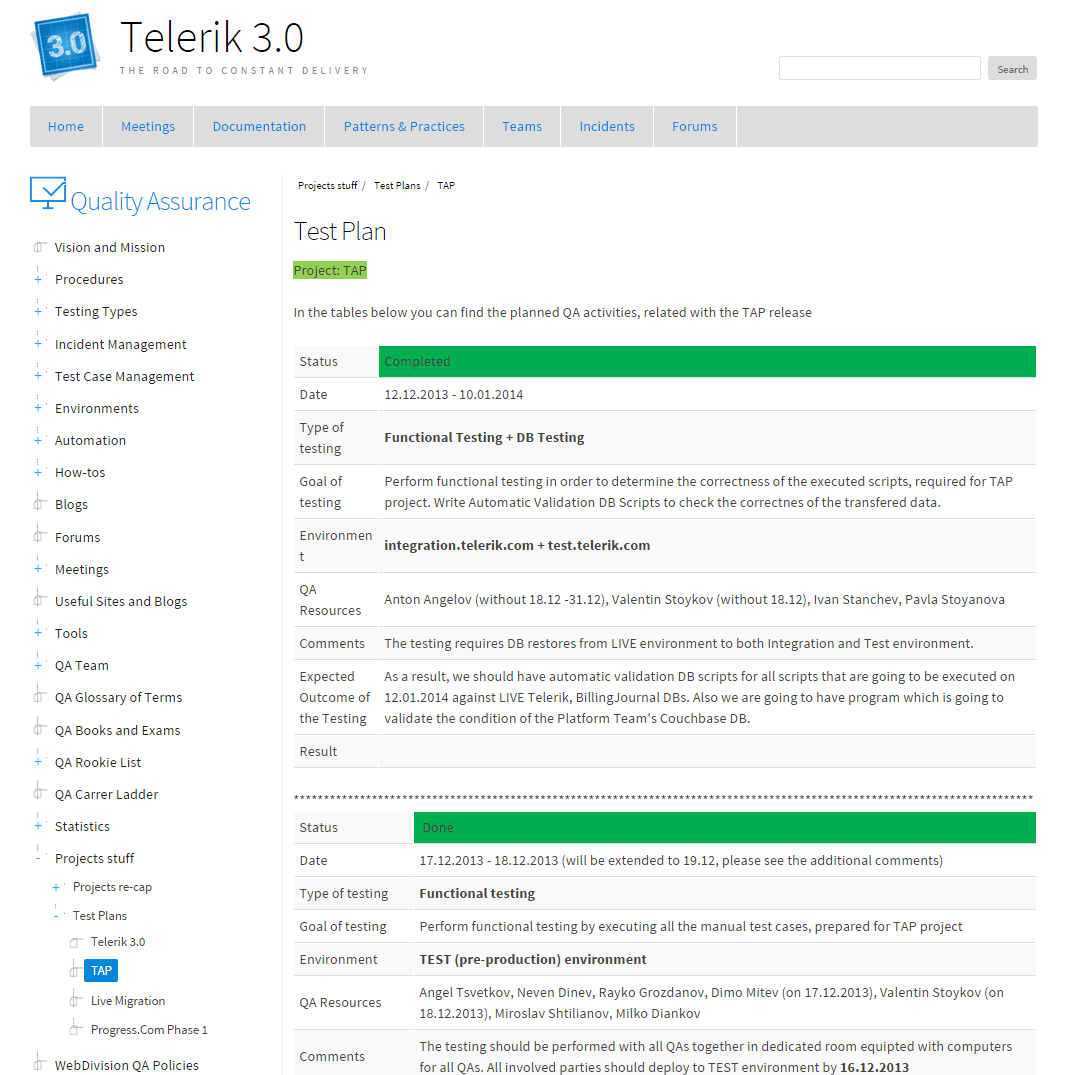

For the most complicated and critical projects, we create test plans and publish them to the portal, so they are visible to all stakeholders:

Areas of Testing

Performance and Load Testing

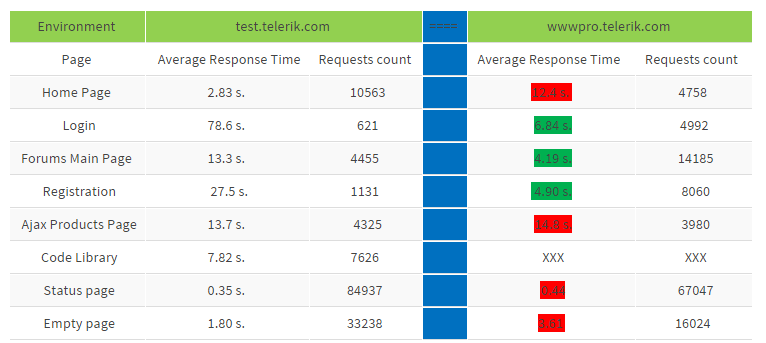

For performance and load tests, we use Visual Studio Web Performance and Load Tests. We execute these types of tests in a dedicated, isolated environment, with separate machines for the web server, DB server, test controller and test agents. We monitor and save the machines' statistics in case a detailed analysis is needed to track down memory leaks or excessive CPU usage. Additionally, we keep detailed baseline statistics for the most relevant pages.

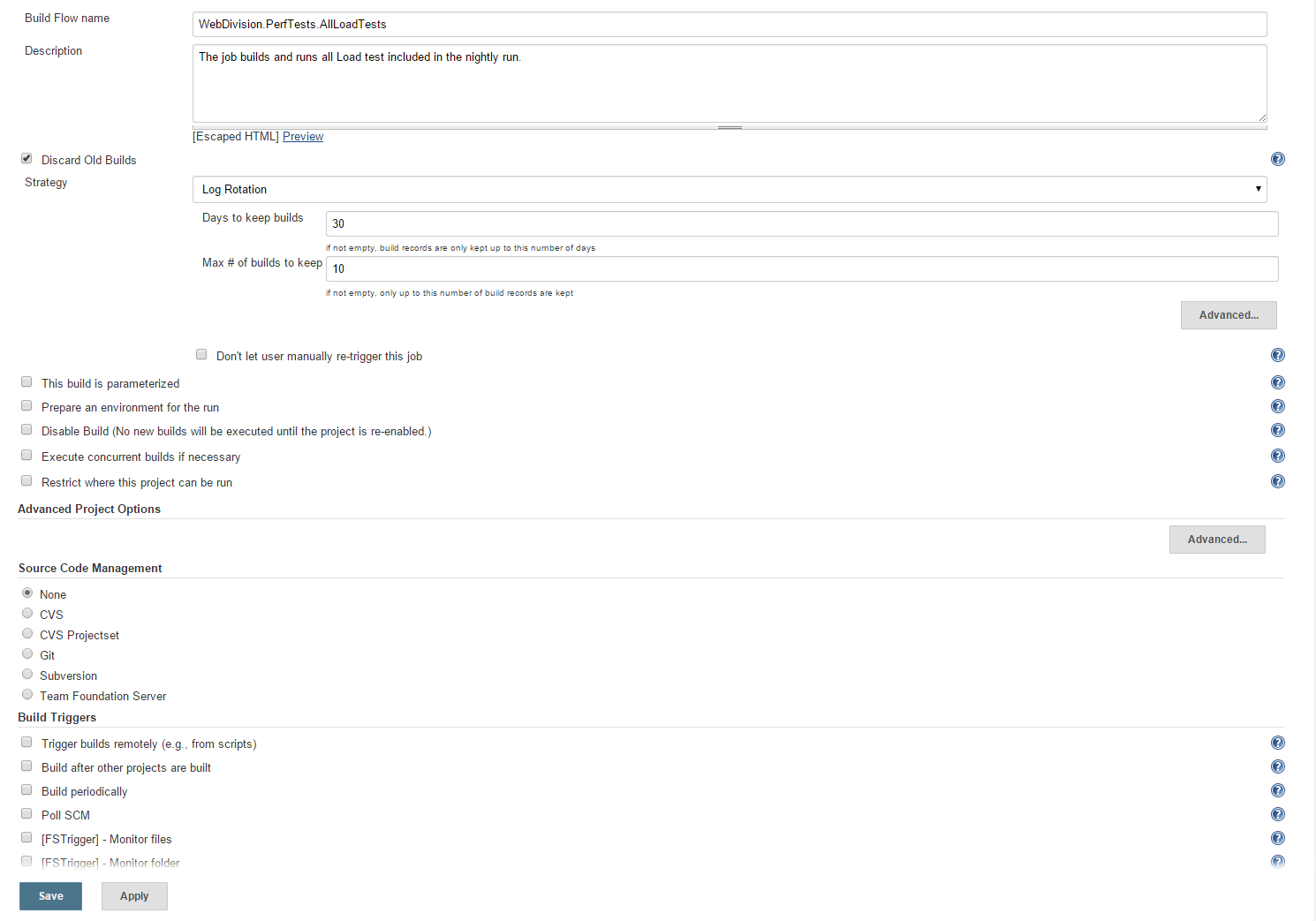

The tests are executed on a nightly basis through a specific Jenkins build:

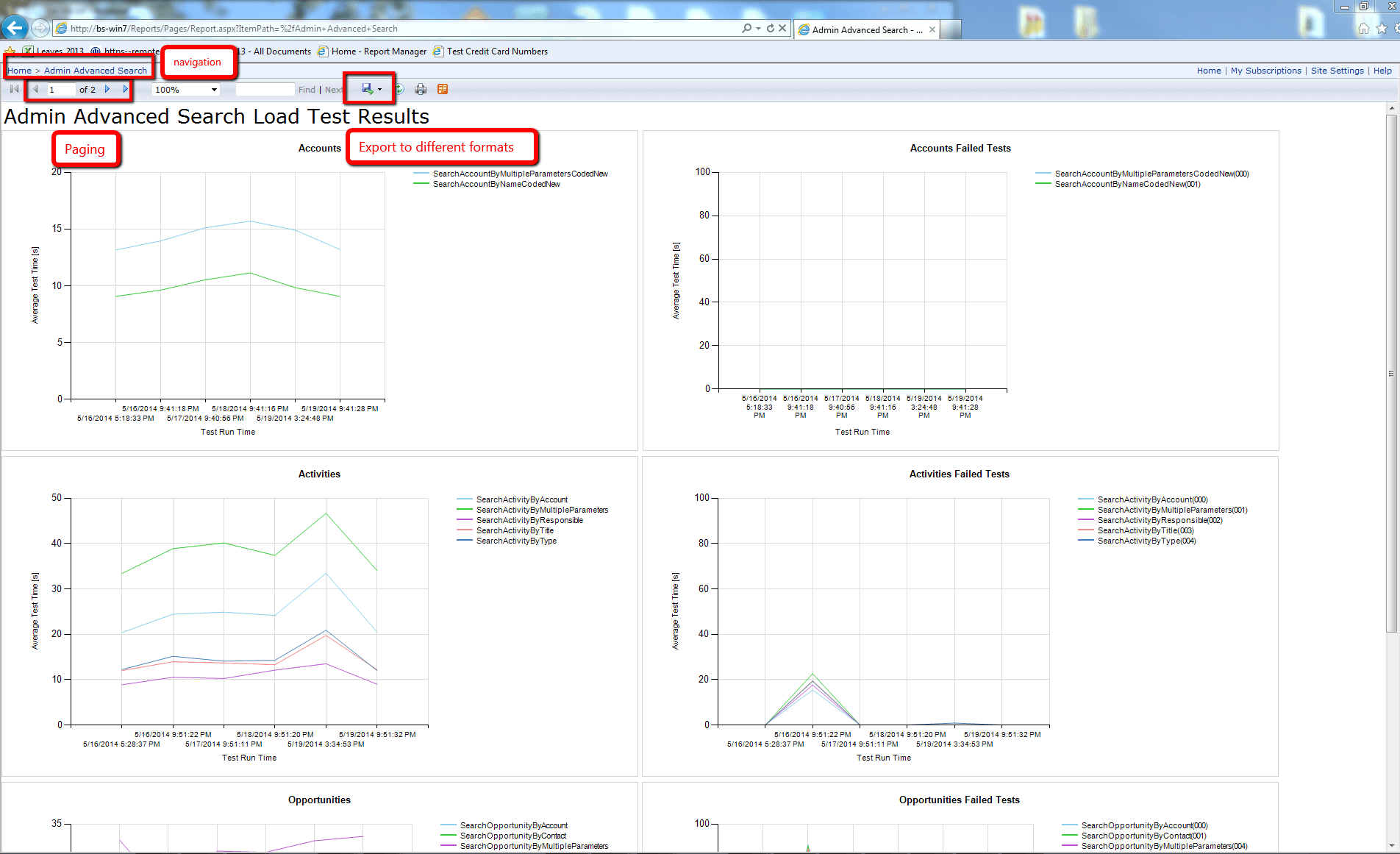

For results visualization, we use SQL Server Reporting Services (SSRS). The web interface enables us to create generic reports.

Web Service Testing

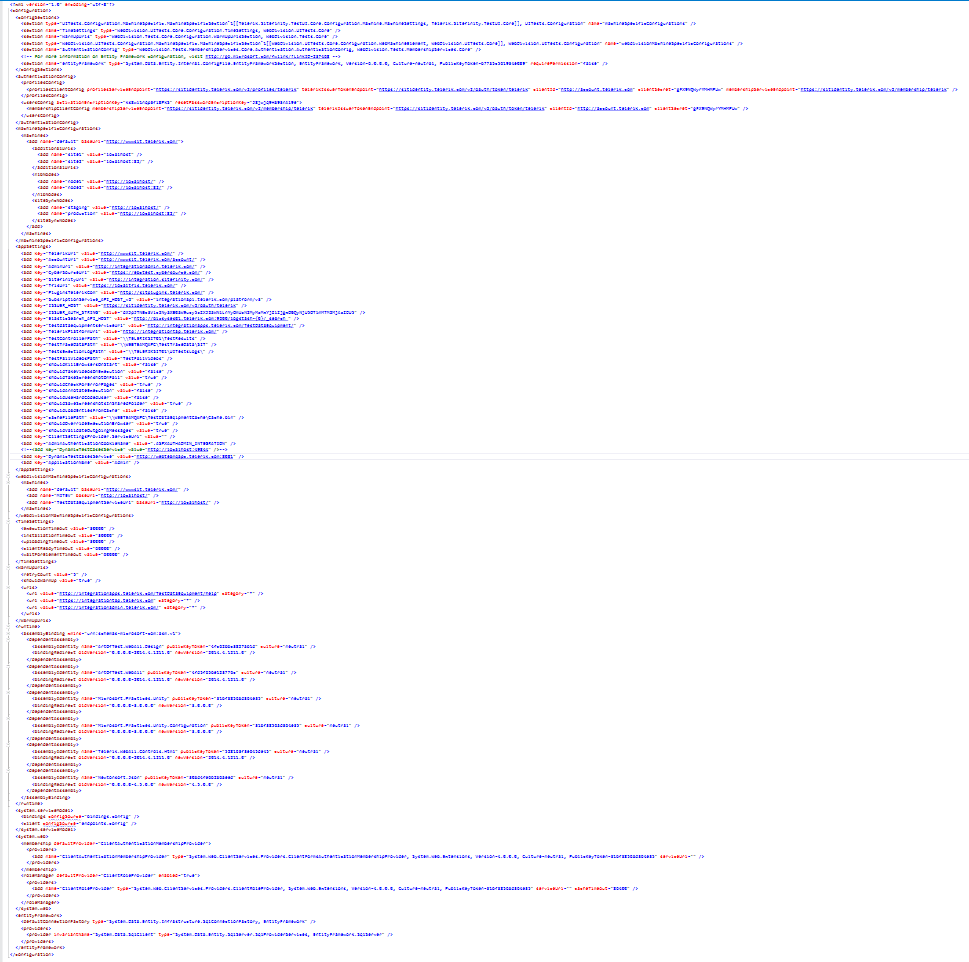

For web service testing, we use the MSTest framework. One of the projects contains auto-generated code that handles the web service invocation (there is a single class for each service). When we update a web service definition, we also update the references by running the BAT file located in the Services folder. Running the BAT file overwrites the auto-generated classes.

The BAT file relies on the svcutil.exe tool. It is the same tool used internally by .NET when you create a service reference, and it automatically generates a file for each service.

The .bat file looks like this:

SET svcPath="%programfiles(x86)%\Microsoft SDKs\Windows\v8.0A\bin\NETFX 4.0 Tools\svcutil.exe"

Another project holds all tests related to the generated service wrappers. Common test helper classes are distributed as NuGet packages and shared between the different types of testing projects: UI, System and Web/Windows service tests.

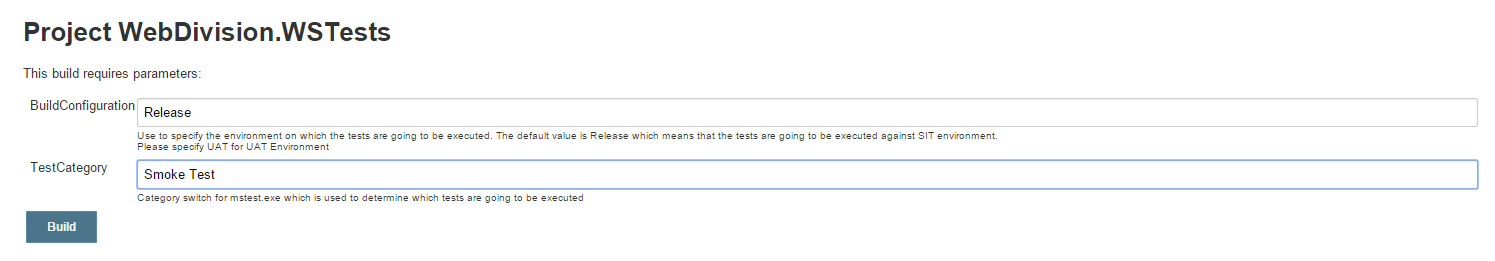

We run all web service tests on a nightly basis through a specific Jenkins build.

Heartbeat Tests

High availability is one of the most important aspects we have committed to deliver with our websites. One of our solutions is setting up heartbeat tests that will run over critical workflows—for example, Login, Download, Registration, Search and so on—continuously. This way, we have a traceable availability history and a degree of certainty about the website's overall availability. Also, we are aware of the website's current state and can resolve issues as soon as they arise, to ensure the website's availability is up to our set standards.

The technologies we use are Test Studio, MSTest and Jenkins. The test runs are always performed over stable changesets, specified in the Jenkins build as a parameter.
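The idea of a traceable availability history can be illustrated with a minimal sketch. It is not the team's actual implementation: `HeartbeatMonitor`, `HeartbeatResult` and the registered checks are hypothetical names, and the checks are plain delegates standing in for real Test Studio or MSTest workflows that a scheduler such as Jenkins would trigger.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// One recorded run of a critical workflow check (hypothetical type).
public class HeartbeatResult
{
    public string Workflow { get; set; } = "";
    public DateTime TimestampUtc { get; set; }
    public bool Passed { get; set; }
    public string Error { get; set; } = "";
}

// Hypothetical monitor: runs named checks and keeps every outcome as history.
public class HeartbeatMonitor
{
    private readonly Dictionary<string, Func<bool>> checks = new Dictionary<string, Func<bool>>();
    private readonly List<HeartbeatResult> history = new List<HeartbeatResult>();

    public IReadOnlyList<HeartbeatResult> History => history;

    // Registers a workflow check; a real check would drive Login, Search, etc.
    public void Register(string workflow, Func<bool> check) => checks[workflow] = check;

    // Runs every registered check once; an exception counts as a failed run.
    public void RunOnce()
    {
        foreach (var entry in checks)
        {
            var result = new HeartbeatResult { Workflow = entry.Key, TimestampUtc = DateTime.UtcNow };
            try { result.Passed = entry.Value(); }
            catch (Exception ex) { result.Passed = false; result.Error = ex.Message; }
            history.Add(result);
        }
    }

    // Percentage of passed runs for a workflow, or 0 when it has never run.
    public double AvailabilityPercent(string workflow)
    {
        var runs = history.Where(r => r.Workflow == workflow).ToList();
        return runs.Count == 0 ? 0 : 100.0 * runs.Count(r => r.Passed) / runs.Count;
    }
}

public static class HeartbeatDemo
{
    public static void Main()
    {
        var monitor = new HeartbeatMonitor();
        int searchCalls = 0;
        monitor.Register("Login", () => true);
        // Simulated flaky workflow: fails on every second run.
        monitor.Register("Search", () => ++searchCalls % 2 == 1);

        for (int i = 0; i < 4; i++) monitor.RunOnce();

        Console.WriteLine("Login availability: " + monitor.AvailabilityPercent("Login") + "%");
        Console.WriteLine("Search availability: " + monitor.AvailabilityPercent("Search") + "%");
    }
}
```

Keeping every run in the history, rather than only the latest status, is what makes it possible to report availability over time as well as the current state.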

UI/E2E/System Tests

For System/E2E tests, we use Test Studio in combination with MSTest. We have more than 40 projects with more than 45k lines of code. High quality standards are enforced through StyleCop and code reviews. We also document coding best practices on our QA portal. Every team works in a separate solution that includes only the team’s related projects. All shared logic, such as the division framework, common validators and utilities, is referenced through NuGet packages.

We use several design patterns in these types of tests, including Singleton, Page Object, Façade, Observer and more.

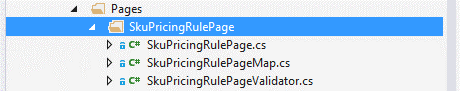

Probably the most important is the Page Object Pattern. Every page is divided into three classes:

- Page Object Element Map: Contains all element properties and their location logic.

- Page Object Validator: Consists of the validations to be performed on the page.

- Page Object (SKUPricingRulePage): Holds actions that can be performed on the page, such as Search and Navigate. It also exposes easy access to the Page Object Validator through the Validate() method. The best implementations of the pattern hide the Element Map from the tests, so that it is used only inside the action methods.
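The three-class split can be sketched as follows. This is an illustration under stated assumptions, not the team's code: `IBrowser`, `FakeBrowser`, `SearchPage`, `SearchPageMap`, `SearchPageValidator`, the locators and the URL are all hypothetical, and the in-memory browser only exists so the example runs without Test Studio or a real browser.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical browser abstraction; a real suite would wrap its UI driver here.
public interface IBrowser
{
    void NavigateTo(string url);
    void Type(string locator, string text);
    void Click(string locator);
    string ReadText(string locator);
}

// Element map: the only place that knows how elements are located.
public static class SearchPageMap
{
    public const string SearchBox = "#search-box";
    public const string SearchButton = "#search-button";
    public const string ResultsLabel = "#results-label";
}

// In-memory stand-in for a browser so the sketch is runnable.
public class FakeBrowser : IBrowser
{
    private readonly Dictionary<string, string> fields = new Dictionary<string, string>();
    public string CurrentUrl { get; private set; } = "";

    public void NavigateTo(string url) => CurrentUrl = url;
    public void Type(string locator, string text) => fields[locator] = text;
    public void Click(string locator)
    {
        // Simulates the page rendering a results label after the search button is clicked.
        if (locator == SearchPageMap.SearchButton)
            fields[SearchPageMap.ResultsLabel] = "Results for: " + ReadText(SearchPageMap.SearchBox);
    }
    public string ReadText(string locator) => fields.TryGetValue(locator, out var value) ? value : "";
}

// Validator: holds the checks that can be performed on the page.
public class SearchPageValidator
{
    private readonly IBrowser browser;
    public SearchPageValidator(IBrowser browser) { this.browser = browser; }

    public void ResultsShownFor(string term)
    {
        string actual = browser.ReadText(SearchPageMap.ResultsLabel);
        if (actual != "Results for: " + term)
            throw new InvalidOperationException("Unexpected results label: '" + actual + "'");
    }
}

// Page object: exposes actions and hides the element map from the tests.
public class SearchPage
{
    private readonly IBrowser browser;
    public SearchPage(IBrowser browser) { this.browser = browser; }

    public void Navigate() => browser.NavigateTo("https://example.test/search");

    public void Search(string term)
    {
        browser.Type(SearchPageMap.SearchBox, term);
        browser.Click(SearchPageMap.SearchButton);
    }

    public SearchPageValidator Validate() => new SearchPageValidator(browser);
}

public static class PageObjectDemo
{
    public static void Main()
    {
        var page = new SearchPage(new FakeBrowser());
        page.Navigate();
        page.Search("grid");
        page.Validate().ResultsShownFor("grid");
        Console.WriteLine("Search validation passed");
    }
}
```

A test written against `SearchPage` never mentions a locator, so a change in the page markup should only require touching the element map.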

The most important aspects of test execution can be configured through test class-level attributes, or app.config settings.

[TestClass, ScreenshotOnFail(true), VideoRecording(VideoRecordingMode.OnlyFail), ExecutionBrowserAttribute(Browsers.Firefox)]

This configuration takes a screenshot only when a test fails. Likewise, the recorded video of the execution is saved only for failed tests. The tests are executed in the Firefox browser. Similar attributes are available at the method level and, if used, they override the class-level attributes.
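How a method-level attribute can take precedence over a class-level one is easiest to see with reflection. The sketch below is an assumption about how such a lookup might work, not the framework's real implementation: the `ExecutionBrowserAttribute` and `Browsers` types defined here are simplified stand-ins for the ones named above, and `BrowserResolver` and `SampleTests` are hypothetical.

```csharp
using System;
using System.Reflection;

public enum Browsers { Firefox, Chrome }

// Simplified stand-in for the ExecutionBrowser attribute mentioned in the article.
[AttributeUsage(AttributeTargets.Class | AttributeTargets.Method)]
public sealed class ExecutionBrowserAttribute : Attribute
{
    public Browsers Browser { get; }
    public ExecutionBrowserAttribute(Browsers browser) { Browser = browser; }
}

public static class BrowserResolver
{
    // The method-level attribute wins; otherwise fall back to the class, then to a default.
    public static Browsers Resolve(MethodInfo testMethod, Browsers fallback)
    {
        var onMethod = testMethod.GetCustomAttribute<ExecutionBrowserAttribute>();
        if (onMethod != null) return onMethod.Browser;

        var onClass = testMethod.DeclaringType?.GetCustomAttribute<ExecutionBrowserAttribute>();
        return onClass != null ? onClass.Browser : fallback;
    }
}

[ExecutionBrowser(Browsers.Firefox)]
public class SampleTests
{
    // No attribute here, so the class-level Firefox setting applies.
    public void UsesClassSetting() { }

    // The method-level attribute overrides the class-level one.
    [ExecutionBrowser(Browsers.Chrome)]
    public void OverridesOnMethod() { }
}

public static class AttributeDemo
{
    public static void Main()
    {
        foreach (var name in new[] { nameof(SampleTests.UsesClassSetting), nameof(SampleTests.OverridesOnMethod) })
        {
            MethodInfo method = typeof(SampleTests).GetMethod(name);
            Console.WriteLine(name + " -> " + BrowserResolver.Resolve(method, Browsers.Firefox));
        }
    }
}
```

Running the demo prints `UsesClassSetting -> Firefox` and `OverridesOnMethod -> Chrome`. The same lookup order would apply to any setting that exists at both levels, such as screenshot or video recording options.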

[TestMethod, Priority(Priorities.VeryHigh), TestCategory(Categories.ContinuousIntegration), TestCategory(Categories.PurchasingCenter), TestCategory(Categories.AssetAdmin), ManualTestCase(6679), Owner(Owners.AntonAngelov), ExecutionBrowser(Browsers.Chrome)]

Even more settings are exposed through the app.config.

Did you find these tips helpful? Stay tuned for the next chapter which will continue the story of Telerik Business Services and how they go about test execution and reporting.

If you are interested in trying out the latest Test Studio, feel free to go and grab your free trial.


Anton Angelov

Anton is a Quality Assurance Architect at Progress. He is passionate about automation testing and designing test harness and tools, having the best industry development practices in mind. He is an active blogger and the founder of Automate The Planet. Ardent about technologies such as C#, .NET Framework, T4, WPF, SQL Server, Selenium WebDriver and Jenkins, he won MVP status at Code Project (2016) and MVB (Most Valuable Blogger) at DZone.